HTAP Data Network: Background, Status

The HTAP Network is a connectivity infrastructure in support of the HTAP TF activities. The goal of the network is to facilitate the seamless flow of model and observational data between the participants of the HTAP Program. The HTAP Data Network Pilot is a demonstration of standards-based interoperability among key nodes in the HTAP Network; its nodes constitute the core of an expanding AQ CoP Data Network.

Evolution of the AQ Data Network

The current (2010) Network has evolved since about 2005. At the first HTAP meeting in Brussels, June 2005, R. Scheffe emphasized three tasks for supporting the HTAP objectives: (1) model evaluation; (2) linkage of remote and land-based observing systems; and (3) integration of data and information systems. At the January 2006 HTAP meeting in Geneva, K. Torseth reviewed the state of observations and identified key impediments to integrating the existing heterogeneous monitoring and data systems. At the same meeting, R. Husar and R. Scheffe presented an outline for an Interoperable Information System of Systems for HTAP based on the service oriented architecture of GEOSS. The potential for data integration was further illustrated at the October 2007 HTAP meeting at FZ Jülich through an ad hoc group presentation on HTAP Network: Application Examples for NOx Analysis at FZ Jülich. At the Paris HTAP meeting, June 2009, Martin Schultz and co-workers reported on the practical aspects of enhancing data connectivity of modeling results using standards-based interoperability protocols. The early focus of the data networking was on information architecture and technical implementation issues, i.e. creating the cyberinfrastructure. At the 2010 HTAP meeting in Brussels, Husar reported on the need for, and experiences with, the AQ Communities of Practice (AQ CoP) as the means of connecting and enabling the human aspects of networking.

Design of Distributed Computing Systems

Service Oriented Architecture of the AQ Data Network

Traditional data processing, integration, and model evaluation are performed using dedicated software tools, handcrafted for specific applications. Such closed 'stovepipe' data systems may work well for specific applications, but data sharing and distributed, collaborative application building are hampered by the rigid architecture (form and function) of the data systems.

The AQ Data Network is envisioned as a connectivity infrastructure (cyberinfrastructure) to allow the flow and integration of observational, emission and model data.

The operations that prepare the integrated datasets and then create new, derived datasets can be broken into distinct services that operate on data streams. Each service is defined by its functionality and by its service interface. In principle, the standards-based interface allows linking of service chains using formal workflow software. The main benefit of such a Service Oriented Architecture (SOA) is the ability to build agile and adaptive application programs that can respond to changes in the data input conditions, processing operations and output requirements. In other words, following the SOA approach, not only the data providers but also the processing services can be distributed and executed by different participants. This flexible approach to distributed computing allows the distribution of labor, the easy creation of different processing configurations, and the reuse of data and processing resources.
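The idea of services chained through a common interface can be sketched in a few lines of Python. This is only an illustration, not the Network's workflow software: the record layout and the three services (`filter_species`, `to_ppb`, `daily_mean`) are hypothetical, and the "service interface" is reduced to "a callable that maps one stream of records to another".

```python
from typing import Callable, Iterable, Iterator

# Assumption: a record is a plain dict and a service is any callable that
# consumes one stream of records and produces another.
Record = dict
Service = Callable[[Iterable[Record]], Iterator[Record]]


def chain(*services: Service) -> Service:
    """Compose services left to right into a single service."""
    def composed(stream: Iterable[Record]) -> Iterator[Record]:
        for service in services:
            stream = service(stream)
        return iter(stream)
    return composed


def filter_species(species: str) -> Service:
    """Hypothetical service: keep only records for one species."""
    def service(stream: Iterable[Record]) -> Iterator[Record]:
        return (r for r in stream if r["species"] == species)
    return service


def to_ppb(stream: Iterable[Record]) -> Iterator[Record]:
    """Hypothetical service: convert mixing ratios from ppm to ppb."""
    for r in stream:
        yield {**r, "value": r["value"] * 1000.0, "unit": "ppb"}


def daily_mean(stream: Iterable[Record]) -> Iterator[Record]:
    """Hypothetical service: average values per (station, day)."""
    sums = {}
    for r in stream:
        key = (r["station"], r["day"])
        total, count = sums.get(key, (0.0, 0))
        sums[key] = (total + r["value"], count + 1)
    for (station, day), (total, count) in sums.items():
        yield {"station": station, "day": day, "value": total / count}


# A different processing configuration is just a different argument list.
pipeline = chain(filter_species("O3"), to_ppb, daily_mean)
```

Because every service shares the same interface, any of them could in principle be replaced by a remote call operated by a different participant without changing the rest of the chain.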

The network can be further enhanced by re-usable tools that can empower the data providers and the data users. As a result of the 'network effect', the overall value created by the emergent network can be many times the sum of the individual contributions.

SOA is an implementation framework of the System of Systems, i.e. the design framework of GEOSS. Each service can be operated by autonomous providers, and the "system" that implements the service is hidden behind the service interface. Combining the independent services (systems) into a functioning composite system constitutes a System of Systems.

Relationship to other "Integrating Initiatives"

The Earth observations and modeling of hemispheric transport are currently pursued by individual projects and programs in North America, Europe and elsewhere. These constitute autonomous systems with well-defined purpose and functionality. The key role of the Task Force is to assess the contributions of the individual systems and to integrate those into a system of systems. Both the data providers and the HTAP analysts-users will be distributed. However, they can be connected through an integrated infrastructure, as well as a set of user-centric tools to empower the collaborating analysts. This network will not compete with existing tools of its members. Rather, it will embrace and leverage those resources through an open, collaborative federation philosophy. Its main contribution is to connect the participating analysts, their information resources and their tools.

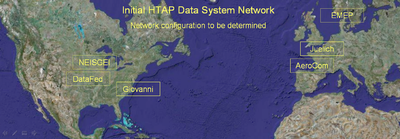

Figure 2.1 Initial (DataFed, Juelich) and planned (Giovanni, AeroCOM, EBAS) nodes of the HTAP Data Networks.

HTAP Network Pilot Nodes

An important (though incomplete) initial set of nodes for the HTAP information network already exists, as shown in Figure 2.1. Each of these nodes is, in effect, a portal to an array of datasets that it exposes through its own interface. Thus, connecting these existing data portals would provide an effective initial approach to incorporating a large fraction of the available observational and model data into the HTAP network. The US nodes DataFed, NEISGEI and Giovanni are already connected through standard (or pseudo-standard) data access services. In other words, data mediated through one of the nodes can be accessed and utilized in a companion node. Similar connectivity is being pursued to the European data portals Jülich, EMEP and others. A connection to the AeroCom data system is also being pursued.

This Pilot study was conducted by developing and implementing web services at key network nodes, each of which provided different kinds of interoperable data access services. The primary data server for the initial offerings was hosted by FZ Juelich, which provided access to a large array of model outputs used in the HTAP program. The NILU EBAS server includes an integrated data set of surface observations that was prepared for the HTAP program. A test server was implemented at NILU to test the methodology for accessing point (monitoring-station) data. The Pilot Network also included the NEISGEI server, which offered global emissions data through a standard interface. The DataFed server was the initial test bed for the development of the WCS server software. DataFed offered standards-based access to multiple data sets, including gridded satellite data, point station monitoring data, and model outputs. DataFed also included client software that facilitated testing of all the nodes in the HTAP Network Pilot.

The HTAP Network Pilot also includes server nodes that are not directly connected to the HTAP network. The Giovanni data system replicated the model data from the FZ Juelich server and also offered gridded satellite data along with analysis and visualization services. A number of additional mediated data servers were also included in the pilot network. These servers offered their data in a computer-accessible manner on the Internet, but the access procedure was not compliant with the WCS protocol. For these servers, the mediation was performed in DataFed, where the offered data sets were made accessible through the WCS protocol using wrapper software components. These servers included RSIG, VIEWS and others. The servers participating in this pilot are described briefly below, along with links to the respective server home pages and literature references.
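To make "access through the WCS protocol" concrete, the sketch below builds a key-value GetCoverage request of the kind a WCS node answers. It is a hedged illustration only: the text does not state which WCS version the Pilot nodes implement, so the parameter names follow WCS 1.0.0 as an assumption, and the endpoint, coverage name and format string in the usage note are hypothetical.

```python
from urllib.parse import urlencode


def build_getcoverage_url(endpoint, coverage, bbox, time, width, height,
                          fmt="NetCDF", crs="EPSG:4326", version="1.0.0"):
    """Return a WCS GetCoverage URL using key-value-pair encoding.

    Assumption: WCS 1.0.0 parameter names. `bbox` is
    (min_lon, min_lat, max_lon, max_lat); `width` and `height` give the
    size of the requested grid in cells. Valid format names are
    server-specific and should be taken from the server's capabilities.
    """
    params = {
        "SERVICE": "WCS",
        "VERSION": version,
        "REQUEST": "GetCoverage",
        "COVERAGE": coverage,
        "CRS": crs,
        "BBOX": ",".join(str(v) for v in bbox),
        "TIME": time,
        "WIDTH": width,
        "HEIGHT": height,
        "FORMAT": fmt,
    }
    return endpoint + "?" + urlencode(params)
```

For example, `build_getcoverage_url("http://example.org/wcs", "o3_surface", (-180, -90, 180, 90), "2001-07-01", 360, 180)` yields a single URL that any HTTP client can fetch; the host and coverage name here are placeholders, not Pilot servers.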

FZ Jülich

1 paragraph description to be completed by Juelich

References and Links:

HTAP Wiki: http://htap.icg.fz-juelich.de/data

HTAP Data access and visualisation user interface: http://htap.icg.kfa-juelich.de:50080/

MACC Data access and visualisation user interface: http://macc.icg.kfa-juelich.de:50080/

CF Checker: http://repositories.icg.kfa-juelich.de/hg/CFchecker/

Complete software tool to test conformance of netcdf output files with CF standard (“CF checker”)

CF Data Tools: http://htap.icg.fz-juelich.de/hg/CFdatatools

Develop operator for interpolation of model results from hybrid pressure vertical coordinate system to user-defined pressure levels.
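The following is a minimal sketch of what such an interpolation operator could look like for a single model column; it is not the CF Data Tools implementation. It assumes the CF-style hybrid sigma-pressure definition p(k) = ap(k) + b(k) * ps and interpolates linearly in log-pressure, which is one common choice among several. The function names are hypothetical.

```python
import math
from bisect import bisect_left


def hybrid_level_pressures(ap, b, surface_pressure):
    """Pressure (Pa) at each model level: p[k] = ap[k] + b[k] * ps."""
    return [ap_k + b_k * surface_pressure for ap_k, b_k in zip(ap, b)]


def interpolate_to_pressure_levels(values, pressures, target_pressures):
    """Interpolate one column linearly in log(p) onto target pressure levels.

    `pressures` must be strictly increasing (top of the atmosphere first).
    Targets outside the model's pressure range yield None instead of an
    extrapolated value.
    """
    log_p = [math.log(p) for p in pressures]
    result = []
    for target in target_pressures:
        if target < pressures[0] or target > pressures[-1]:
            result.append(None)
            continue
        x = math.log(target)
        i = bisect_left(log_p, x)  # first level with log_p[i] >= x
        if i == 0:
            result.append(values[0])
            continue
        weight = (x - log_p[i - 1]) / (log_p[i] - log_p[i - 1])
        result.append(values[i - 1] + weight * (values[i] - values[i - 1]))
    return result
```

Because the level pressures depend on surface pressure, the two steps have to be repeated for every grid column and time step; a production operator would vectorize this over the whole model field.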

DataFed - Federated Data System

DataFed (1) facilitates the access and flow of atmospheric data from providers to users, (2) supports the development of user-driven data processing value chains, and (3) participates in specific air quality application projects. The federation currently mediates access to over 100 near real-time and historical observation and model datasets. The server side of DataFed, acting as a mediator, uses non-intrusive 'wrapping' to provide WCS standards-based access to distributed datasets on the Internet. Since 2004, DataFed has provided IT support to a number of air quality management applications. Its evolution is recorded on the DataFed Wiki. DataFed is now an applied system used in everyday research by several air quality analysis groups.

References and Links:

http://www.delicious.com/rhusar/interoperability+mediator

(IEEE Paper DataFed and GEOSS, 2008) http://datafedwiki.wustl.edu/images/8/85/IEEE_SysJournalGEOSS_DataFed.pdf

The Users and the GEOSS Architecture V - Denver 06 Proceedings http://www.grss-ieee.org/menu.taf?menu=geoss&detail=GEOSSWorkshops&conferenceid=39&pageid=24

The Workshop Series “The User and the GEOSS Architecture” - OGC site. http://www.ogcnetwork.net/GEOSSdemos

Denver Demo - OGC site http://www.ogcnetwork.net/node/137

IGARSS06 Denver Symposium Main Page, July 31 - Aug. 4, 2006 http://www.igarss06.com/index.html

IEEE GEOSS Workshop Main Page, "The User and the GEOSS Architecture V", Applications for N. America http://www.grss-ieee.org/menu.taf?menu=GEOSS&detail=include&html=GEOSSArchitectureV

The User and the GEOSS Architecture (PDF) - Background/Architecture of Workshop Series http://www.grss-ieee.org/files/The_User_and_the_GEOSS_Architecture.pdf

Workshop Agenda http://wiki.esipfed.org/images/b/b8/Agenda_Arch_Workshop-_NA_r6.doc

NEISGEI Emission

1 paragraph description To be completed.

References and Links:

NILU - EBAS

1 paragraph description to be completed by NILU-EBAS

References and Links:

Other Nodes: AQS, RSIG, VIEWS

The core nodes in the HTAP Data Network Pilot are those that serve observations or models using standard interfaces. The Pilot network also includes mediated server nodes that make their data accessible through the Web but not through a web service protocol. For these data servers, DataFed acts as a mediator by providing a WCS web service interface to the data using a WCS wrapper-adapter interface.
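The wrapper-adapter idea can be illustrated with a small Python sketch. It is not DataFed's wrapper code: both classes, their methods and the row layout are hypothetical stand-ins, chosen only to show how a mediator can answer a WCS-style query (coverage, bounding box, time) by translating it into a server's native access procedure without modifying that server.

```python
class NativeStationArchive:
    """Stand-in for a server with its own, non-WCS access procedure."""

    def __init__(self, rows):
        # Assumed row layout: (parameter, iso_date, lon, lat, value)
        self._rows = rows

    def fetch(self, parameter, date):
        """Native query: all rows for one parameter on one date."""
        return [r for r in self._rows if r[0] == parameter and r[1] == date]


class WcsStyleWrapper:
    """Adapter that answers WCS-style queries through the native interface."""

    def __init__(self, archive):
        self._archive = archive

    def get_coverage(self, coverage, bbox, time):
        """Return the observations inside `bbox` for one coverage and time.

        `bbox` is (min_lon, min_lat, max_lon, max_lat). The spatial subset
        is applied by the wrapper because the native interface lacks it.
        """
        min_lon, min_lat, max_lon, max_lat = bbox
        rows = self._archive.fetch(coverage, time)
        return [
            {"lon": lon, "lat": lat, "time": date, "value": value}
            for _, date, lon, lat, value in rows
            if min_lon <= lon <= max_lon and min_lat <= lat <= max_lat
        ]
```

The design point is that the wrapper is non-intrusive: the native archive is used as-is, and only the adapter needs to know both vocabularies.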

AQS

RSIG

VIEWS

ECCAD

At the end of October 2011, Michael Decker from Juelich went to Toulouse to implement a Community WCS server for the ECCAD emissions database. After solving some technical issues and enhancing the metadata of a sample emission data file, the connection was successfully established [1] and a link was made to the Juelich web interface [2] (de-select the button "Hide catalogues that are not CF compliant" and choose the "ECCAD test server" catalogue). Michael identified a couple of technical and semantic issues that need to be addressed before ECCAD can come fully online:

- the WCS server requires some information in the netcdf files that goes beyond the bare necessities of being CF-compliant. Michael suggests adding a WCS-specific set of tests to the CF-checker tool to make it easier to see whether a file could be delivered through the Community WCS server or not (and to provide feedback on what needs to be changed)

- some institutions block the non-standard ports and are rather strict about this. We should explore the option to use the normal http port for the WCS server and interface.

- the server should allow for direct access to the (SQL?) database of ECCAD to avoid the intermediate step of exporting a netcdf file from ECCAD and then delivering it through WCS.

- Juelich will open the interface software to the community, but this requires some initial re-structuring and preparations (for which we need a person first)