WCS Access to netCDF Files

Link to WCS_NetCDF_Development

Introduction

The purpose of this effort is to create a portable software template for accessing netCDF-formatted data using the WCS protocol. Using that protocol allows any WCS-compliant client software to access the stored data. It is hoped that the standards-based data access service will promote the development and use of distributed data processing and analysis tools.

The initial effort is focused on developing and applying the WCS wrapper template to the HTAP global ozone model outputs created for the HTAP global model comparison study. These model outputs are being managed by Martin Schultz's group at Forschungszentrum Juelich, Germany. It is hoped that, following a successful implementation at Juelich, the WCS interface could also be implemented at Michael Schulz's AeroCom server, which archives the global aerosol model outputs.

It is proposed that the HTAP data information system adopt the Web Coverage Service (WCS) as its standard data query language. The adoption of a set of interoperability standards is a necessary condition for building an agile data system from loosely coupled components for HTAP. During 2006/2007, members of the HTAP TF made considerable progress in evaluating and selecting suitable standards. They also participated in the extension of several international standards, most notably standard names (CF Convention), data formats (netCDF-CF) and a standard data query language (OGC Web Coverage Service, WCS).

A recent addition (July 16, 2010) implements point data as a supported data type. If the data is collected at regular intervals from a network of stations and stored in a SQL database, the implementation provides templates for configuring the WCS interface.

The netCDF-CF Data Format

The netCDF-CF file format is a common way of storing and transferring gridded meteorological and air quality model results. The CF convention for structuring and naming netCDF-formatted data further enhances the semantics of the netCDF files. Most of the recent model outputs conform to netCDF-CF. The netCDF-CF convention is a key step toward standards-based storage and transmission of Earth Science data.

The netCDF-CF data format is supported by a robust set of well-documented and maintained low-level libraries for creating, maintaining and accessing data in that format on multiple platforms (Linux, Windows). The low-level libraries provided by UNIDATA also offer a clear application programming interface (API). On the server side, the libraries can be used to create and to subset the netCDF data files. On the client side, the libraries allow easy access to the transmitted netCDF contents. Thus, both the data servers and the application developers are enabled by the robust netCDF libraries.

The existing names for atmospheric chemicals in the CF convention were inadequate to accommodate all the parameters used in the HTAP modeling. To remedy this shortcoming, the list of standard names was extended by the HTAP community under the leadership of C. Textor. She also became a member of the CF convention board that is the custodian of the standard names. The standard names for HTAP models were developed using a collaborative wiki workspace. It should be noted, however, that at this time the CF naming convention has only been developed for the model parameters and not for the various observational parameters. The naming of individual chemical parameters will follow the CF convention used by the Climate and Forecast (CF) communities.

The netCDF-CF data format is most useful for the exchange of multidimensional gridded model data. It has also been demonstrated that the netCDF format is well suited for the encoding and transfer of station monitoring data. Traditionally, satellite data were encoded and transferred using the HDF format. The new netCDF version 4 (beta) library provides a common API for the netCDF and HDF-5 data formats.

Multiple Servers, Multiple Clients, connected with WCS protocol

Interoperability can be achieved via a good standard, one that addresses the important issues and is not too complicated to implement. WMS has achieved this goal, and WCS can achieve it too.

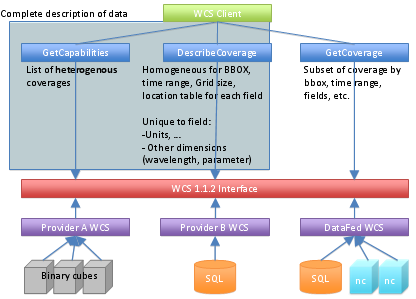

High level view of WCS protocol

- With GetCapabilities and DescribeCoverage queries the client can get all the metadata, so that the GetCoverage query can be made without guesswork. The client can zoom in to the desired area, time, and parameter without searching.

- A WCS client can be anything that understands WCS 1.1.2: a human with a web browser, a data-consuming application, or the DataFed viewer.

- The most important standard here is the return data format. Regardless of whether the data is stored in netCDF-CF files, netCDF files with another convention, binary cubes, a SQL DBMS or something else, the returned data is formatted according to a precise standard. For grids it is CF 1.0 or later; for points it is CF 1.5 stationTimeSeries.
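
The query sequence can be sketched with Python's standard library. This is only an illustration: the endpoint and coverage name are placeholders, and the key names follow the WCS 1.1.0 sample calls quoted in the reports on this page. It is an assumption that a given server accepts exactly these keys.

```python
from urllib.parse import urlencode

# Hypothetical endpoint, used only for illustration.
ENDPOINT = "http://example.org/wcs/MyDataset"


def wcs_url(request, **params):
    """Return a WCS 1.1.0 key-value-pair URL for the given request type."""
    query = {"service": "WCS", "version": "1.1.0", "Request": request}
    query.update(params)
    # Keep ',', '/', ':' and '*' readable, as in hand-written WCS URLs.
    return ENDPOINT + "?" + urlencode(query, safe=",/:*")


# 1. Discover the coverages, 2. read one coverage's metadata,
# 3. request a subset of it.
capabilities = wcs_url("GetCapabilities")
describe = wcs_url("DescribeCoverage", identifiers="my_coverage")
coverage = wcs_url(
    "GetCoverage",
    identifier="my_coverage",
    BoundingBox="-180,-90,180,90,urn:ogc:def:crs:OGC:2:84",
    TimeSequence="2001-01-01/2001-06-01",
    RangeSubset="*",
    format="image/netcdf",
    store="true",
)
```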

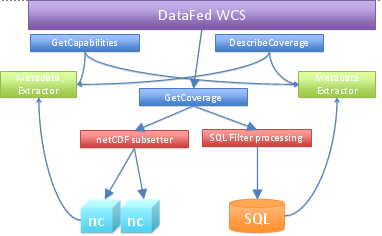

Architecture of datafed WCS server

Queries, Metadata Extractors, Subsetters, Backends

- Backends: the system supports two kinds of data storage.

- netCDF-CF for grids, access library written by Unidata.

- Any SQL DBMS for points.

- The backends hold nothing but plain data. For SQL there is no requirement for a standard schema. By adding SQL views you can configure the database to match an existing access component, and if that is not possible, a custom access component can be written.

- Metadata Extraction

- For netCDF-CF the built-in utility reads through all the files and stores all the metadata in a file for fast retrieval.

- If there were a CF-like convention for SQL DB schemas, it would also be possible to extract point metadata automatically. Currently the hand-edited configuration just lists all the metadata: coverages, fields, dimensions, keywords, datatypes, units and access information. If the data is stored in netCDF CF 1.5 stationTimeSeries format, automatic extraction is possible.

- GetCoverage

- For netCDF the extractor subsets the file and returns exactly the same kind of file with all the metadata, with dimensions trimmed according to the filters and with only the selected variables.

- With points using SQL as backend the WCS query is turned into an SQL query.

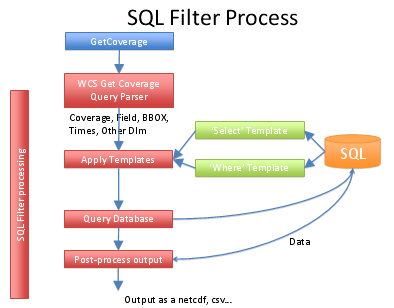

Processing of custom SQL database

- The query is parsed. The GetCoverage query is parsed and the result is a query object, with filters for each dimension in an easy to access format. This is a generic operation, not implementation specific.

- The select templates are generated. The simplest way to create a template is to create a view in the DB that lists all the result fields: loc_code, lat, lon, datetime, data field, data flags. In this manner the template is simply the field name, and the engine can just write select loc_code, lat, lon, [datetime], dewpoint from dewpoint_view. If there is no such view, then the template must contain the data table and the field name, so that a proper join can be generated.

- The filter templates are generated. Again, if there is a flat view of the data, the engine can just write where [datetime] between '2008-05-01' and '2008-06-01'

- Now the SQL query is ready and can be executed, resulting in a set of rows.

- These rows are then forwarded to a post-processing component that writes netCDF, CSV, XML or whatever format the user requested.
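
The five steps above can be sketched end to end with Python's standard library. This is a minimal illustration, not the DataFed implementation. It uses an in-memory SQLite database in place of the production DBMS, assumes a flat view named dewpoint_view with the columns listed above, and assumes that point queries carry RangeSubset, TimeSequence and BoundingBox keys. All function names and the sample rows are hypothetical.

```python
import csv
import io
import sqlite3
from urllib.parse import parse_qs

# Hypothetical configuration: field name -> (flat view, result columns).
TEMPLATES = {
    "dewpoint": ("dewpoint_view",
                 ["loc_code", "lat", "lon", "datetime", "dewpoint"]),
}


def parse_query(query_string):
    """Step 1: parse the KVP query into a filter object (a dict here)."""
    kvp = {key.lower(): vals[0] for key, vals in parse_qs(query_string).items()}
    start, end = kvp["timesequence"].split("/")
    west, south, east, north = map(float, kvp["boundingbox"].split(",")[:4])
    return {"field": kvp["rangesubset"], "time": (start, end),
            "bbox": (west, south, east, north)}


def build_sql(query):
    """Steps 2 and 3: fill in the select and filter templates."""
    view, columns = TEMPLATES[query["field"]]  # unknown fields raise KeyError
    select = ", ".join("[{}]".format(col) for col in columns)
    sql = ("select {} from {} where [datetime] between ? and ? "
           "and lon between ? and ? and lat between ? and ?"
           ).format(select, view)
    west, south, east, north = query["bbox"]
    # Filter values are passed as parameters, never pasted into the SQL text.
    return sql, (*query["time"], west, east, south, north)


def to_csv(columns, rows):
    """Step 5: write the rows in the requested output format (CSV here)."""
    out = io.StringIO()
    writer = csv.writer(out, lineterminator="\n")
    writer.writerow(columns)
    writer.writerows(rows)
    return out.getvalue()


def get_coverage(conn, query_string):
    query = parse_query(query_string)
    sql, args = build_sql(query)
    rows = conn.execute(sql, args).fetchall()  # step 4: run the query
    return to_csv(TEMPLATES[query["field"]][1], rows)


def make_demo_db():
    """Build a tiny in-memory stand-in for the flat view (made-up rows)."""
    conn = sqlite3.connect(":memory:")
    conn.execute("create table dewpoint_view (loc_code text, lat real, "
                 "lon real, [datetime] text, dewpoint real)")
    conn.executemany("insert into dewpoint_view values (?, ?, ?, ?, ?)", [
        ("A", 40.0, -90.0, "2008-05-15", 12.5),
        ("B", 40.0, -90.0, "2008-07-01", 15.0),
        ("C", 60.0, -90.0, "2008-05-20", 3.0),
    ])
    return conn
```

Calling get_coverage(make_demo_db(), "RangeSubset=dewpoint&TimeSequence=2008-05-01/2008-06-01&BoundingBox=-100,30,-80,50") returns a CSV header plus the single row for station A, since B falls outside the time filter and C outside the bounding box.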

Reports

HTAP Network Project: June-July 2009 Progress Report by CAPITA

The initial effort was focused on developing and applying the WCS wrapper template to the HTAP global ozone model outputs created for the HTAP global model comparison study. These model outputs are being managed by Martin Schultz's group at Forschungszentrum Juelich, Germany.

In May, June and July 2009, WUSTL and FZ Juelich collaborated on the implementation of the WCS service wrapper for the model data curated in Juelich. The WUSTL data wrapper code was ported to a server at Juelich and debugged over several iterations. Several inconsistencies were found along the way that required reconciliation. The co-development of the WCS wrapper code was facilitated by a shared programming environment with automatic synchronization of the code under development.

Michael Decker of the Juelich group has added significant improvements and adaptations to the wrapper code that made it consistent with the Juelich model netCDF files. As an outcome of this first phase, a test model dataset has been published on the Juelich server. Subsequently, the dataset was successfully registered on DataFed and became accessible for browsing and further processing.

In July, contact was also established with Greg Leptoukh at NASA Goddard to expand the WCS-netCDF implementation to the satellite datasets served through the Giovanni node of the HTAP data sharing network. In particular, the Giovanni team was invited to participate in the collaborative development and testing of the WCS services. The open collaborative workspace for this HTAP Network effort is accessible through the ESIP Wiki http://wiki.esipfed.org/index.php?title=WCS_Access_to_netCDF_Files

Evaluation of Juelich WCS: August 2009 Report by Giovanni

The focus of this study is to evaluate and compare WCS standards used by the Open Geospatial Consortium (OGC), Juelich, DataFed, and Giovanni. A minimum set of WCS standards is to be developed in conjunction with Juelich and DataFed. See Giovanni's current Media:Minimum Set WCS Standards.doc.

Juelich’s “HTAP server project” monthly progress report (July 2009) provides the following URL for accessing the Juelich server: http://htap.icg.kfa-juelich.de:58080. Michael Decker provided instructions for exploring Juelich’s “HTAP_monthly” test dataset. The following are sample calls to:

GetCapabilities

http://htap.icg.kfa-juelich.de:58080/HTAP_monthly?service=WCS&version=1.1.0&Request=GetCapabilities

DescribeCoverage

http://htap.icg.kfa-juelich.de:58080/HTAP_monthly?service=WCS&version=1.1.0&Request=DescribeCoverage&identifiers=ECHAM5-HAMMOZ-v21_SR1_tracerm_2001

and GetCoverage

http://htap.icg.kfa-juelich.de:58080/HTAP_monthly?service=WCS&version=1.1.0&Request=GetCoverage&identifier=ECHAM5-HAMMOZ-v21_SR1_tracerm_2001&BoundingBox=-180,-90,180,90,urn:ogc:def:crs:OGC:2:84&TimeSequence=2001-01-01/2001-06-01&RangeSubset=*&format=image/netcdf&store=true

The Juelich WCS test server may also be accessed through the DataFed client (http://webapps.datafed.net/datafed.aspx?dataset_abbr=HTAPTest_G). A sample GetCoverage URL may be obtained by selecting the “WCS Query” button:

http://webapps.datafed.net/HTAPTest_G.ogc?SERVICE=WCS&REQUEST=GetCoverage&VERSION=1.0.0&CRS=EPSG:4326&COVERAGE=NCAS_vmr_no2&TIME=2001-05-01T00:00:00&BBOX=-180,-90,180,90,0.996998906135559,0.00460588047280908&WIDTH=10000&HEIGHT=10000&DEPTH=1&FORMAT=NetCDF

Sample calls for non-HTAP Giovanni data are as follows:

GetCapabilities:

http://gdata1.gsfc.nasa.gov/daac-bin/G3/giovanni-wcs.cgi?SERVICE=WCS&WMTVER=1.0.0&REQUEST=GetCapabilities

DescribeCoverage:

http://gdata1.gsfc.nasa.gov/daac-bin/G3/giovanni-wcs.cgi?SERVICE=WCS&WMTVER=1.0.0&REQUEST=DescribeCoverage&COVERAGE=MYD08_D3.005::Optical_Depth_Land_And_Ocean_Mean

GetCoverage:

http://gdata1.gsfc.nasa.gov/daac-bin/G3/giovanni-wcs.cgi?SERVICE=WCS&WMTVER=1.0.0&REQUEST=GetCoverage&COVERAGE=MYD08_D3.005::Optical_Depth_Land_And_Ocean_Mean&BBOX=-90.0,-45.0,90.0,45.0&TIME=2007-04-07T00:00:00Z&FORMAT=NetCDF

Action Item [Giovanni/Juelich/DataFed]: Use the same CRS URN (Uniform Resource Name) in all servers and clients. There are two major inconsistencies in the current WCS server/client implementations. The first is the incorrect axis order for latitude and longitude. The first axis of EPSG:4326 is latitude, not longitude (see EPSG 6422 for the Ellipsoidal CS definition). Thus, when this CRS is used, the bounding box values should be listed as "minLat,minLon,maxLat,maxLon". The second is the exact location of the bounding box values. WCS version 1.1.x explicitly states that bounding box values define the locations of grid points, not the edges of grid cells. Thus the WGS 84 bounding box for a data set of 180 rows by 360 columns with 1 degree spacing is "-179.5, -89.5, 179.5, 89.5", not "-180,-90,180,90". The latter would require the data to have 181 rows and 361 columns. This interpretation should also apply to WCS v1.0.0, although it is not explicitly stated in the v1.0.0 specification.
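
The arithmetic behind the second inconsistency can be checked with a few lines of Python. The function names are hypothetical, and the sketch assumes a regular global grid whose points sit at the cell centers.

```python
def grid_point_bbox(n_rows, n_cols, spacing):
    """Bounding box (min_lon, min_lat, max_lon, max_lat) of the grid POINTS
    of a global regular grid centered on (0, 0)."""
    half_width = (n_cols - 1) * spacing / 2
    half_height = (n_rows - 1) * spacing / 2
    return (-half_width, -half_height, half_width, half_height)


def points_spanned(lower, upper, spacing):
    """Number of grid points needed to cover [lower, upper] inclusively."""
    return round((upper - lower) / spacing) + 1
```

For 180 rows, 360 columns and 1 degree spacing, grid_point_bbox gives (-179.5, -89.5, 179.5, 89.5), while points_spanned(-180, 180, 1) is 361 and points_spanned(-90, 90, 1) is 181, matching the row and column counts stated above.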

Action Item [Giovanni]: Change WMTVER keyword to VERSION on the server side.

Action Item [Giovanni]: Add "CRS=EPSG:4326" in the request when Giovanni acts as a client or always require the KVP when acting as a server.

Initial comparisons between Juelich, DataFed, and Giovanni’s GetCoverage reveal that while DataFed and Giovanni use WCS VERSION 1.0.0, the Juelich server uses 1.1.0. Furthermore, the most obvious result of our comparison is that the Juelich server does not return a NetCDF file. Instead, a small XML envelope is returned, which contains metadata and a pointer to the result. This occurs because Juelich’s server currently only supports a value of “true” for the STORE parameter. Juelich has noted that in the future “store=false” may be supported, returning a MIME multipart message that includes both the XML and the NetCDF file, from which the client could extract the NetCDF file. To accommodate the current configuration, Giovanni may opt to simply set “store=true” in the GetCoverage call. Additionally, WCS version checking will become mandatory to ensure backward compatibility.

Action Item [Giovanni]: Place “store=true” in GetCoverage call.

Action Item [Giovanni]: Have a WCS version checking mechanism.
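
One possible shape for such a version check is sketched below. The key names per version are taken from the sample calls on this page (COVERAGE, BBOX and TIME for 1.0.0; identifier, BoundingBox and TimeSequence for 1.1.0). The function name and the version-neutral argument names are hypothetical.

```python
# Request keys per WCS version, as used in the sample calls on this page.
KEY_NAMES = {
    "1.0.0": {"coverage": "COVERAGE", "bbox": "BBOX", "time": "TIME"},
    "1.1.0": {"coverage": "identifier", "bbox": "BoundingBox",
              "time": "TimeSequence"},
}


def get_coverage_params(version, coverage, bbox, time):
    """Map version-neutral arguments onto the keys of one WCS version."""
    try:
        names = KEY_NAMES[version]
    except KeyError:
        raise ValueError("unsupported WCS version: " + version) from None
    params = {"SERVICE": "WCS", "VERSION": version, "REQUEST": "GetCoverage",
              names["coverage"]: coverage, names["bbox"]: bbox,
              names["time"]: time}
    if version == "1.1.0":
        # The Juelich 1.1.0 server currently supports only store=true.
        params["store"] = "true"
    return params
```

Rejecting unknown versions explicitly, rather than falling back to a default, keeps a client from silently sending 1.0.0 keys to a 1.1.0 server.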

Michael Decker, of Juelich, acknowledges the need to rectify the SERVICE parameter being ignored in GetCoverage.

Action Item [Juelich/DataFed]: Include SERVICE parameter in GetCoverage.

The CRS value of EPSG:4326 is used by all three parties. However, the Juelich server follows the 1.1.0 convention and appends the CRS identifier to the end of the BoundingBox value.

A difference in the parameter naming convention between WCS 1.0.0 and 1.1.0 is BBOX/BoundingBox. A discrepancy with respect to longitudes was noticed while probing a NetCDF file that was obtained by accessing the URL that contains the link to a Juelich test file. As recommended for the “HTAP_monthly” test dataset, “BoundingBox” was set to “-180,-90,180,90”. However, the file revealed that only longitudes of 0 through 180 were included. In order to obtain all longitudes, the start and end values have to be set to 0 and 360. This is because a majority of Juelich files are on a 0 to 360 degree grid. DataFed states that plans are underway to support both longitude styles, 0 to 360 and -180 to 180, regardless of how the data is stored. The CRS specifications for the Juelich server will need to be modified in order to be consistent with their longitude value range.

Action Item [Juelich/DataFed]: The CRS specifications need to be modified to correctly set the longitude value range. Currently, the BoundingBox value contains WGS84 in the URN, which implies that the longitude range is from -180 to 180 (see EPSG code 1262 for detailed information). There are two alternatives to correct this: 1) use a CRS URN that defines the longitude range as [0,360]; or 2) change the server to advertise, accept client requests in, and label the output with the [-180,+180] longitude range. Option (2) is perhaps the easier way to go. If (2) is used, the server doesn't have to rotate the original data array, but it must return the output coverage using the [-180,+180] range.
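
Relabeling longitudes between the two ranges is simple modular arithmetic. A minimal sketch, with hypothetical function names:

```python
def to_pm180(lon):
    """Map a longitude in degrees onto the [-180, 180) range."""
    return (lon + 180.0) % 360.0 - 180.0


def to_0_360(lon):
    """Map a longitude in degrees onto the [0, 360) range."""
    return lon % 360.0
```

Note that in this sketch 180 maps to -180, so a server would still need to decide how to label that edge.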

Both Juelich and DataFed use the dataset as the WCS server name for the COVERAGE/identifier parameter value. Both Hualan and Wenli suggest that Giovanni WCS either use a look-up table or specify how to link the coverage name used in WCS and the actual product or data files in the archive. The result file naming convention appears to be the same for the original HTAP files that exist in Giovanni’s local directory and those returned from the Juelich server. The following format was verified by Michael Decker: ${model}_${scenario}_${filetype}_${year}.

Action Item [Giovanni]: Giovanni WCS should either use a look-up table or specify how to link the coverage name used in WCS and the actual product or data files in the archive.
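
As an illustration of such a link, the naming pattern verified by Michael Decker can be split back into its parts. This sketch assumes that only the model name may itself contain underscores; the function name is hypothetical.

```python
def parse_coverage_name(name):
    """Split '${model}_${scenario}_${filetype}_${year}' into its parts."""
    # Split from the right so underscores inside the model name survive.
    model, scenario, filetype, year = name.rsplit("_", 3)
    return {"model": model, "scenario": scenario,
            "filetype": filetype, "year": int(year)}
```

Applied to the coverage name from the sample calls, ECHAM5-HAMMOZ-v21_SR1_tracerm_2001, this yields model ECHAM5-HAMMOZ-v21, scenario SR1, file type tracerm and year 2001.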

One of the differences between WCS 1.0.0 and 1.1.0 is the TIME (1.0.0) / TimeSequence (1.1.0) parameter. While DataFed uses a single value, Juelich specifies a range. In our sample, the time format is ${yyyy-mm-dd/yyyy-mm-dd}. Michael Decker added that TimeSequence may also be specified as a single point in time, a list of points in time separated by “,”, or time ranges (with the option to specify periodicity). He further states that TimeSequence follows ISO 8601. Both the original files that Giovanni holds locally and the files returned via WCS from the Juelich server contain one time value for each month. This was validated with testing. The tests revealed that whether the start and end times spanned a month or several days (with or without a specified periodicity), the output is valid for a single day, which implies that interpolation is occurring. The output time stamp is valid for the day interpolated to instead of for the requested time period. Furthermore, a single value such as a month or a year (e.g. TimeSequence=2001-01 or TimeSequence=2001) returns an error stating that a period must be used. If the coverage data is a yearly value, it should use ${yyyy}. If it is a monthly value, it should use ${yyyy-mm}. Similarly, daily products should be advertised in ${yyyy-mm-dd} format. The complexity of the time definition and the lack of documentation in the current specification cause this time discrepancy. Clarification is therefore required, and a change request to the specification is necessary.

Action Item [Juelich/DataFed]: Clarification (definition and documentation) is required in the current TimeSequence specification.
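
The three TimeSequence forms described by Michael Decker can be told apart with a small parser. This is a sketch only: it does not validate the time strings themselves, the function name is hypothetical, and the ISO 8601 duration P1M used in the example below is an assumed notation for the periodicity.

```python
def parse_time_sequence(value):
    """Parse a TimeSequence value into a list of points and ranges.

    Each comma-separated item is either a single time ('2001-01-01') or a
    range 'start/end' with an optional third '/period' part.
    """
    items = []
    for part in value.split(","):
        pieces = part.strip().split("/")
        if len(pieces) == 1:
            items.append({"point": pieces[0]})
        elif len(pieces) in (2, 3):
            period = pieces[2] if len(pieces) == 3 else None
            items.append({"start": pieces[0], "end": pieces[1],
                          "period": period})
        else:
            raise ValueError("malformed TimeSequence item: " + part)
    return items
```

For example, parse_time_sequence("2001-01-01/2001-06-01/P1M") returns one range with start 2001-01-01, end 2001-06-01 and period P1M.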

The “Minimum Set WCS Standards” document notes the need to resolve case sensitivity in the value for the FORMAT parameter. Giovanni currently supports “format=HDF” and “format=NetCDF”. Juelich supports “image/netcdf”. Although it is not necessary, Giovanni would like Juelich to change to “format=image/NetCDF”. Michael Decker stated that this should not be a problem but that DataFed needs to be contacted regarding this since they are responsible for the installation.

Optional Item [Juelich/DataFed]: Although not significant, Giovanni asks for the Juelich server to change “format=image/netcdf” to “format=NetCDF”.

The STORE parameter is used only by Juelich. Since Juelich is using WCS 1.1.0 and only supports “store=true”, Giovanni will need to implement an extra step to parse the URL link to the NetCDF file from the XML returned from the GetCoverage call.

Action Item [Giovanni]: Implement a step to parse out the URL link to the NetCDF file from the XML returned from the GetCoverage call.
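
A minimal sketch of such a parsing step is shown below. The sample XML is a simplified, hypothetical stand-in for the envelope returned by the server, and the sketch assumes that the pointer to the result is carried in an xlink:href attribute and that the file name ends in .nc. The function name is hypothetical.

```python
import xml.etree.ElementTree as ET

# Attribute name of xlink:href in ElementTree's namespace notation.
XLINK_HREF = "{http://www.w3.org/1999/xlink}href"

# Simplified, hypothetical response; a real envelope carries more metadata.
SAMPLE = """<Coverages xmlns:xlink="http://www.w3.org/1999/xlink">
  <Coverage>
    <Reference xlink:href="http://example.org/results/out.nc"/>
  </Coverage>
</Coverages>"""


def find_result_urls(xml_text, suffix=".nc"):
    """Return every xlink:href in the response that ends with `suffix`."""
    root = ET.fromstring(xml_text)
    return [element.attrib[XLINK_HREF] for element in root.iter()
            if element.attrib.get(XLINK_HREF, "").endswith(suffix)]
```

Searching all elements for the attribute, instead of following a fixed element path, keeps the sketch independent of the exact envelope layout.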

The RangeSubset parameter is utilized only by Juelich because WCS 1.1.0 allows multiple range fields for multiple variables (each may require their own interpolation method). RangeSubset is not allowed in v1.0.0 (individual variables only). The RangeSubset parameter is utilized to specify which variable(s) the user is interested in. If RangeSubset is not provided then all variables in the file are returned. The same is true if “RangeSubset=*”. Variable names that are requested are separated by “;”.
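
The selection rule described above can be written down in a few lines. This sketch handles only plain variable names separated by “;” and ignores any per-field interpolation options; the function name and the variable names in the example are hypothetical.

```python
def select_variables(range_subset, available):
    """Return the variables requested by a RangeSubset value."""
    if range_subset is None or range_subset == "*":
        return list(available)  # missing or '*': return everything
    requested = [name for name in range_subset.split(";") if name]
    unknown = [name for name in requested if name not in available]
    if unknown:
        raise ValueError("unknown variables: " + ", ".join(unknown))
    return requested
```

With available variables vmr_o3, vmr_no2 and vmr_co, the value "vmr_o3;vmr_co" selects two of them, while None and "*" both select all three.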

Note that data slicing is available for subsetting latitudes/longitudes, time, and for obtaining variable(s), but is not available for vertical levels. Additionally, the data is specified on sigma levels. Juelich will need to pre-process the data in order to interpolate this vertical data from sigma levels to pressure levels. Additional data improvements, such as setting appropriate fill (missing) values (for specific models) and how to resolve surface levels when they are beneath the physical surface after interpolation, are needed in pre-processing.

Action Item [Giovanni]: Provide Juelich with email describing the data improvements necessary for pre-processing.

Action Item [Giovanni]: Document (model by model) the differences between the various models' data on the ESIP wiki page.

Action Item [Juelich/DataFed]: Pre-process the HTAP model output data to: a) interpolate from sigma levels to pressure, b) set correct fill values, c) rectify under-the-surface cases.
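
The three pre-processing steps can be illustrated for a single model column. This is only a sketch under strong assumptions: a pure sigma coordinate (pressure = sigma × surface pressure), linear interpolation in pressure, and a made-up fill value. Real HTAP models may use other vertical coordinates and need model-specific handling.

```python
FILL_VALUE = -999.0  # hypothetical fill value


def sigma_to_pressure(values, sigma, surface_pressure, targets,
                      fill=FILL_VALUE):
    """Interpolate one column from sigma levels to pressure levels.

    values[i] belongs to sigma[i]; sigma increases toward the surface.
    Assumes a pure sigma coordinate: p = sigma * surface_pressure.
    """
    pressures = [s * surface_pressure for s in sigma]
    result = []
    for target in targets:
        if target > surface_pressure:
            result.append(fill)  # below the physical surface
        elif target < pressures[0] or target > pressures[-1]:
            result.append(fill)  # outside the model levels
        else:
            for i in range(len(pressures) - 1):
                p0, p1 = pressures[i], pressures[i + 1]
                if p0 <= target <= p1:
                    weight = (target - p0) / (p1 - p0)
                    result.append(values[i]
                                  + weight * (values[i + 1] - values[i]))
                    break
    return result
```

For values [10, 20, 30] on sigma levels [0.25, 0.5, 1.0] with a surface pressure of 1000, the target levels 375 and 750 fall halfway between model levels and give 15 and 25, while 1050 (under the surface) and 100 (above the top level) receive the fill value.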

Optional Item [Juelich/DataFed]: If upgrading to WCS 1.1.2, allow for vertical slicing.

Team members propose that Giovanni provide another independent version 1.1.2 server. If this occurs then there is no backward compatibility issue, which minimizes the impact to existing users (most of the work will be done in DescribeCoverage).

Optional Item [Giovanni]: Provide another independent WCS version 1.1.2 server.

HTAP model comparison spreadsheet: October 21, 2009 Report by Giovanni

Giovanni is re-posting the HtapModelComparison.xls spreadsheet, which was created to better understand the differences and similarities between the HTAP models. These differences complicate model pre-processing from sigma to uniform pressure levels. The spreadsheet highlights the differences between the HTAP models with respect to vertical levels, time, zero fields, and missing values. We would welcome an ongoing discussion on how to understand these models better. Please let us know if you see something that is incorrect. Media:HtapModelComparison.xls