Sensor Data Management Middleware

Overview

Middleware are software packages and procedures that reside virtually between data collectors, such as automated sensors, and data ‘consumers’, such as data repositories, websites, or other software applications. Middleware can be used to perform tasks such as streaming data from data loggers to servers, archiving data, analyzing data, or generating visualizations.

Many middleware packages are available for developing a comprehensive, reliable, and cost-effective environmental information management system. Each middleware option can have a unique set of requirements or capabilities, and costs can vary widely. A single middleware package may be used if it includes all of the user requirements, or multiple middleware may be bundled into a data management system if they are compatible or interoperable with each other and the rest of the data collection and management system.

This section describes multiple middleware packages that are currently available, and provides examples of how different software and procedures are being used to collect, analyze, visualize, and disseminate sensor-supplied environmental data.

Introduction

There are multiple factors that may affect the choice, use, and performance of middleware. These factors may be classified according to a group’s research agenda, technological requirements, and personnel skill sets.

Research Agenda

The research agenda of a group is a major determinant of the type of middleware system needed. A group focused on one or a few narrow research questions may need fewer types of sensors and, consequently, fewer software modules to streamline data processing from collection to the end goal. A team that investigates multiple questions spanning multiple research domains is likely to use more diverse and/or larger sets of sensors. There may not be a single middleware package that can meet all of the needs of such a research group. In this case, multiple packages will need to be linked into a workflow.

Technological Requirements

The technological requirements of a research program may vary from simple to complex. If the research can be done with sensors from a single, well-managed company, the proprietary software packaged with the purchased sensor network may be adequate for at least a major portion of the information management system. For example, Campbell Scientific’s “LoggerNet” software integrates communication, data download, display, and graphics functions for Campbell Scientific dataloggers. However, some dataloggers and sensors, particularly innovative or custom-built ones, may need custom-written software. It is important to plan time and budgets for required software upgrades, licensing, additional packages, support, and maintenance. Systems that cost less at the outset may not always be cheaper over the long run. It is also important to consider how best to meet infrastructure and bandwidth requirements when deploying middleware on a variety of servers or laptop computers in the field or lab. Depending on the data and hardware infrastructure characteristics, each middleware option can introduce benefits or drawbacks to the overall system functionality.

Personnel Skills

Another key factor to consider is the skill set of the personnel. A complex data management system may require multiple people, each with a unique skill set such as database design, system architecture, or web programming. It is important to correctly identify each person’s skill set and role in data management tasks. It may also be necessary to plan for additional hires or job training to address various scenarios and solutions, to identify appropriate salaries, and to budget enough time for software development and system administration. More details about the personnel roles and skills can be found in the “Roles and required skill sets” section.

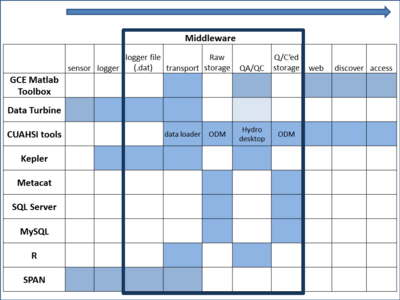

Middleware Classifications

Classification by functionality

Middleware can be classified with respect to the functionality they provide, such as:

- Controlling instrumentation and data collection: Modules may be used to control sampling intervals, manage event-triggered (burst) or continuous sampling regimes, and communicate and transfer data between the instrumentation and other system components.

- Data monitoring, processing, and analysis: Modules may provide alarm management, perform automated QA/QC on data streams, or run derivative calculations including averages, aggregation and accumulation, data shifting and transformation, filtering of time series records with respect to the dates, value range, location, station/variable type, or other criteria.

- Export and publishing of data: Modules may provide functionality to export sensor data to different formats (e.g., ASCII, binary, or XML) or to different archives, make data discoverable through geospatial catalogues, or publish the data through web services.

- Data visualization: Modules may provide visualization (e.g., tables, graphs, sonograms) of geospatial and/or time series data from sensor arrays or workflow structures.

- Documentation: Modules may be used to document field events through paperless collection of field data, integrate sensor data and documentation (see sensor tracking & documentation section), or handle sensor calibration records.

- Other supported functionality: Modules may be used to provide access to external data (e.g., ODBC, JDBC, OLE DB), to connect or chain other middleware components, or to implement mobile applications.
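
To make the "data monitoring, processing, and analysis" category more concrete, the following minimal sketch shows a range-based QA/QC flag followed by a daily aggregation that ignores flagged values. The function names, flag labels, thresholds, and readings are all hypothetical and are not taken from any of the packages discussed in this document.

```python
from datetime import datetime
from statistics import mean


def flag_range(records, low, high):
    """Attach a QC flag to each (timestamp, value) record.

    'ok' when low <= value <= high, 'range' when outside, 'missing' for None.
    """
    flagged = []
    for ts, value in records:
        if value is None:
            flag = "missing"
        elif low <= value <= high:
            flag = "ok"
        else:
            flag = "range"
        flagged.append((ts, value, flag))
    return flagged


def daily_means(flagged):
    """Average the values flagged 'ok', grouped by calendar day."""
    by_day = {}
    for ts, value, flag in flagged:
        if flag == "ok":
            by_day.setdefault(ts.date(), []).append(value)
    return {day: mean(vals) for day, vals in sorted(by_day.items())}


# Hypothetical air temperature readings in degrees Celsius.
readings = [
    (datetime(2013, 5, 14, 0, 0), 4.0),
    (datetime(2013, 5, 14, 12, 0), 12.0),
    (datetime(2013, 5, 14, 18, 0), 999.0),  # typical sensor error code
    (datetime(2013, 5, 15, 0, 0), None),    # dropped record
    (datetime(2013, 5, 15, 12, 0), 10.0),
]
flagged = flag_range(readings, low=-50.0, high=60.0)
summary = daily_means(flagged)
```

Keeping the flag alongside the raw value, instead of deleting suspect records, lets later steps decide how to treat them.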

Classification by propriety and type

Middleware can also be classified by software proprietary rights and whether they are considered applications or platforms. Accordingly, we can identify different groups of middleware:

- Proprietary data management applications and platforms

- Proprietary research applications

- Limited open source applications (free packages that can be used with proprietary solutions)

- Open source data management applications and platforms

- Open source research applications and programming languages

Some of the applications and platforms in these groups are the software of choice for many different organizations. More details about individual packages are provided in the Middleware Package Descriptions section of this document.

Best Practices

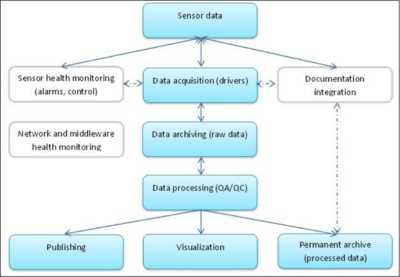

Choosing the middleware components that will best fit the tasks and work environment can be challenging. In addition to the personnel roles and skills, budget, and infrastructure considerations already discussed in the Introduction and other chapters of this best practices guide, it is important to be aware of the whole sensor management process in order to identify suitable middleware components. In some cases, proprietary middleware will be required as part of the information management system because the instrumentation only outputs data in a proprietary format. In other cases, multiple open source software packages may be suitable for chaining into a comprehensive system that manages data from collection to final archiving and sharing.

Some steps in selecting middleware are:

- Identify your objectives. What do you want the middleware to do?

- Assemble a list of candidate software.

- Rate the candidates based on capabilities, cost (keeping in mind that a simple-to-use but expensive package may cut costs in the long term), stability, and ease of use with respect to the personnel skills available on your team.

- If no single software product can meet all the objectives, test to see how well different candidate software integrate with one another to perform the needed functions.
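
One simple way to carry out the rating step above is a weighted scoring matrix. The sketch below is only an illustration: the criteria weights, the 1 to 5 rating scale, and the package names are hypothetical and should be replaced with values agreed on by your team.

```python
def rank_candidates(ratings, weights):
    """Return (name, weighted mean score) pairs, best candidate first."""
    total_weight = sum(weights.values())
    scores = {
        name: sum(weights[c] * rating[c] for c in weights) / total_weight
        for name, rating in ratings.items()
    }
    return sorted(scores.items(), key=lambda item: item[1], reverse=True)


# Hypothetical weights: capabilities matter most, ease of use least.
weights = {"capabilities": 3, "cost": 2, "stability": 2, "ease_of_use": 1}

# Hypothetical ratings on a 1 (poor) to 5 (excellent) scale.
ratings = {
    "Package A": {"capabilities": 5, "cost": 2, "stability": 4, "ease_of_use": 3},
    "Package B": {"capabilities": 3, "cost": 5, "stability": 3, "ease_of_use": 4},
}

ranking = rank_candidates(ratings, weights)
```

A score like this is a starting point for discussion, not a substitute for testing how well the candidates integrate with one another.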

During this planning stage, consider the following recommendations:

- Identify workflow components and describe their functional requirements from the instrumentation to the archive level of organization (see Figure 2). Some components can be optional or part of the more complex solutions.

- Plan for robust execution and choose software and hardware components that can handle the loss of connectivity, power, or other failures related to harsh environmental or operational conditions.

- Choose reusable/sharable components.

- Keep field deployment of middleware as simple as possible (keep middleware out of the field if possible).

- Use as few middleware components as possible based on research group requirements.

- Document and diagram the entire workflow and update as needed.
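
The recommendation to plan for loss of connectivity is often met with a store-and-forward pattern: records are buffered locally and forwarded in order once the link returns. The following is a minimal in-memory sketch under stated assumptions. The class name, the `send` callable, and the buffer size are hypothetical, and a field system would normally persist the buffer to disk so that it also survives a power loss.

```python
from collections import deque


class StoreAndForward:
    """Buffer records locally and forward them when the link is up."""

    def __init__(self, send, max_buffered=10000):
        self._send = send  # callable that raises ConnectionError when the link is down
        # When the buffer is full, the oldest record is dropped silently.
        self._pending = deque(maxlen=max_buffered)

    def submit(self, record):
        """Queue a record, then try to drain the queue in order."""
        self._pending.append(record)
        return self.flush()

    def flush(self):
        """Send queued records oldest first; stop at the first failure."""
        sent = 0
        while self._pending:
            try:
                self._send(self._pending[0])
            except ConnectionError:
                break  # keep the record for the next attempt
            self._pending.popleft()
            sent += 1
        return sent

    @property
    def pending(self):
        return len(self._pending)


# Simulated link that can be switched on and off.
link = {"up": False}
received = []


def send(record):
    if not link["up"]:
        raise ConnectionError("link down")
    received.append(record)


uplink = StoreAndForward(send)
uplink.submit({"t": 1, "value": 3.2})  # link down: record is held
uplink.submit({"t": 2, "value": 3.4})
held_while_down = uplink.pending
link["up"] = True
uplink.submit({"t": 3, "value": 3.5})  # link restored: all records go out in order
```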

Case Studies

We present several real-world case studies that vary widely in the types of ecosystems in which sensors are deployed and in the complexity of the information management system. Some case studies include proprietary software only, some include free or open-source software, and some include both.

Marmot Creek Research Site, Rocky Mountains, Canada

Introduction: The Marmot Creek research site is located on the eastern slopes of the Rocky Mountains in Alberta, Canada. The site is dominated by needleleaf vegetation and poorly developed mountain soils. Precipitation, snow depth, soil moisture, soil temperature, shortwave and longwave radiation, air temperature, humidity, wind speed, and turbulent fluxes of heat and water vapour are measured, and the resulting data sets are used for hydrological modelling of the Marmot Creek Basin. Time series records are obtained at the Hay Meadow, Upper Clearing, Vista View, Fisera Ridge, and Centennial Ridge hydro-meteorological stations, which are equipped with different sensor configurations and Campbell Scientific data loggers.

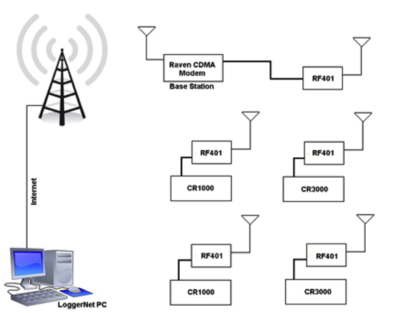

Communication equipment and methods: The telemetry network consists of one Raven CDMA cellular modem and one RF401 spread-spectrum radio modem located at the Upper Clearing base station, four additional RF401 modems located at the meteorological stations serviced by telemetry, and a desktop computer located at the University of Saskatchewan. The radios connected to the data loggers at each of the meteorological stations communicate with the base station on an ongoing basis. All of the data loggers and RF401 radios have PakBus addresses and operate as PakBus nodes. The data loggers are also set to operate as routers, which enables routing inside this network through various paths. The telemetry network configuration is presented in Figure 3.

Data collection and processing: Data are collected from the meteorological stations at the intervals prescribed within the LoggerNet application running on a desktop computer. The Raven CDMA modem transfers data using a dynamic IP address and a static alias associated with it through the Airlink IPmanager software. A unique PakBus address is assigned to each of the dataloggers in this telemetry network. In most cases, logger data files at the off-site location are appended on a daily, four-hourly, and hourly basis. In addition to the scheduled intervals, field data can be downloaded on demand through the LoggerNet application.

The LoggerNet “Task Master” utility is used to execute custom programs after each successful collection of field data. The utility can also be used to start scheduled executions of different programs and operations. For Marmot Creek records, Task Master is used to rename the collected data logger files and upload them to the FTP server.
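
The source does not describe the custom programs that Task Master runs at Marmot Creek. As a minimal sketch of what a rename-and-upload program of this kind might look like, the following uses Python's standard `ftplib`. The file naming pattern, function names, and connection parameters are hypothetical.

```python
from datetime import datetime
from ftplib import FTP
from pathlib import Path


def timestamped_name(path, collected_at):
    """Insert the collection time before the file extension.

    'UpperClearing_hourly.dat' collected at 2009-01-15 13:00 becomes
    'UpperClearing_hourly_20090115T1300.dat'.
    """
    p = Path(path)
    return f"{p.stem}_{collected_at:%Y%m%dT%H%M}{p.suffix}"


def rename_and_upload(path, collected_at, host, user, password, remote_dir="."):
    """Rename a collected file in place, then upload it over FTP."""
    src = Path(path)
    dst = src.with_name(timestamped_name(src, collected_at))
    src.rename(dst)
    with FTP(host) as ftp:  # plain FTP: credentials travel unencrypted
        ftp.login(user=user, passwd=password)
        ftp.cwd(remote_dir)
        with dst.open("rb") as fh:
            ftp.storbinary(f"STOR {dst.name}", fh)
    return dst


# Only the naming step is exercised here; the upload needs a reachable server.
new_name = timestamped_name("UpperClearing_hourly.dat", datetime(2009, 1, 15, 13, 0))
```

Embedding the collection time in the file name keeps successive uploads from overwriting one another on the server.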

Data publishing: Field data downloaded to the off-site computer are accessed by the RTMC Pro LoggerNet utility. The most recently measured values are mapped to specified locations on a web page. The web server hosts different RTMC files for daily summary information, station data tables, alarms, and other records. The main interface contains individual windows for the main screen web page as well as screens for individual stations, weekly data graphs, and site information. RTMC files interface with the web page via the RTMC Web Server desktop utility.

Reference: Centre for Hydrology, University of Saskatchewan. University of Saskatchewan Hydrology Field Data Retrieval and Management Manual. 2009. PDF file.

University of Texas at El Paso's Systems Ecology Lab, Jornada research site, NM

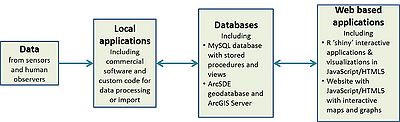

Middleware used: Hobolink, MySQL, R, ArcGIS, HTML5/JavaScript website

Introduction: The Systems Ecology Lab (SEL) at the University of Texas at El Paso studies patterns and controls of land-atmospheric water, energy, and carbon fluxes in both arctic and desert biomes. At the USDA-ARS Jornada Experimental Range in southern New Mexico, SEL’s research site collects data using more than 100 automated sensors (made by Campbell, Onset, Decagon, PPSystems, and others) and manual field observations. Sensors are mounted on an eddy covariance tower, eight connected mini-towers (which together form a wireless sensor network), and a cart mounted on a 110 m long tramline. More than 4 GB of data are collected per week from micromet, hyperspectral, and gas flux sensors, as well as from cameras that detect changes in phenology. This research site is also used to help develop and test new cyberinfrastructure and information management concepts and tools. For this case study, we focus solely on measurements made by the 8-node wireless sensor network.

Data Collection and Processing: The 8-node wireless sensor network is composed exclusively of Onset’s Hobo data loggers (8) and sensors (62). Each data logger is powered by its own solar panel. Sensors measure precipitation, leaf wetness, PAR, solar radiation, and soil moisture. Data are relayed to the Jornada Headquarters and sent to Onset. The data are available for visualization and downloading via Hobolink (http://www.hobolink.com). The online service allows users to set up alerts for system malfunctions and automated reporting of data. Our team developed a database schema (implemented in MySQL) that organizes data around a core concept common to all datasets: a measurement on a focal entity by an observer at a specific location and time. Around this core, there are other tables to store metadata such as project information, maintenance records, and related files. Data from the wireless sensor network are imported into the database via custom SQL scripts. Within the database, scheduled queries perform basic data quality checking and flagging, and generate tables of summarized data (e.g., daily means) that can be accessed shortly after the raw data are imported.
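
The core of such a schema, together with a quality-check query and a daily summary table, can be sketched as follows. This is an illustration only: it uses Python's built-in SQLite module in place of MySQL so that it runs without a server, and every table name, column name, threshold, and data value is hypothetical, not SEL's actual design.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
CREATE TABLE measurement (
    id          INTEGER PRIMARY KEY,
    entity      TEXT NOT NULL,   -- what was measured, e.g. 'soil_moisture'
    observer    TEXT NOT NULL,   -- sensor or person that made the measurement
    location    TEXT NOT NULL,
    measured_at TEXT NOT NULL,   -- ISO 8601 timestamp
    value       REAL,
    qc_flag     TEXT DEFAULT 'unchecked'
);
""")

rows = [
    ("soil_moisture", "node1_probe", "node1", "2013-05-14T00:00:00", 0.10),
    ("soil_moisture", "node1_probe", "node1", "2013-05-14T12:00:00", 0.14),
    ("soil_moisture", "node1_probe", "node1", "2013-05-14T18:00:00", 7.50),  # implausible
    ("soil_moisture", "node1_probe", "node1", "2013-05-15T06:00:00", 0.12),
]
conn.executemany(
    "INSERT INTO measurement (entity, observer, location, measured_at, value) "
    "VALUES (?, ?, ?, ?, ?)",
    rows,
)

# Quality check: flag values outside an assumed plausible range (0 to 1).
conn.execute("""
UPDATE measurement
SET qc_flag = CASE WHEN value BETWEEN 0 AND 1 THEN 'ok' ELSE 'range' END
WHERE entity = 'soil_moisture'
""")

# Summary table of daily means built only from values that passed the check.
conn.execute("""
CREATE TABLE daily_mean AS
SELECT entity, location, date(measured_at) AS day, AVG(value) AS mean_value
FROM measurement
WHERE qc_flag = 'ok'
GROUP BY entity, location, date(measured_at)
""")

daily = conn.execute("SELECT day, mean_value FROM daily_mean ORDER BY day").fetchall()
```

In a production system the two queries would be run on a schedule after each import, for example by the database's event scheduler or an external job scheduler.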

Data Publishing: Our team wrote web services in Python to query the summarized data and deliver the results (in JSON format) to a JavaScript/HTML5 website, in which AmCharts’ JavaScript Charts library (http://www.amcharts.com/; free for non-commercial use) is used to plot the data and generate reports that include the data and pertinent metadata. This website also renders a map of the site and sensors via an ESRI ArcSDE geodatabase and ArcGIS Server.
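
The source does not say which Python framework the team used. As a minimal sketch of a JSON-delivering web service, the following uses only the standard library's WSGI support. The URL layout, the variable name, and the data values are hypothetical, and the in-memory dictionary stands in for a database query.

```python
import json
from wsgiref.util import setup_testing_defaults

# Hypothetical summarized data, standing in for a database query result.
DAILY_MEANS = {
    "soil_moisture": [
        {"day": "2013-05-14", "mean": 0.12},
        {"day": "2013-05-15", "mean": 0.11},
    ],
}


def app(environ, start_response):
    """WSGI application: GET /daily/<variable> returns daily means as JSON."""
    parts = environ.get("PATH_INFO", "").strip("/").split("/")
    if len(parts) == 2 and parts[0] == "daily" and parts[1] in DAILY_MEANS:
        status = "200 OK"
        payload = {"variable": parts[1], "data": DAILY_MEANS[parts[1]]}
    else:
        status = "404 Not Found"
        payload = {"error": "unknown resource"}
    body = json.dumps(payload).encode("utf-8")
    start_response(status, [("Content-Type", "application/json"),
                            ("Content-Length", str(len(body)))])
    return [body]


def call(path):
    """Invoke the app in-process, without opening a network socket."""
    environ = {}
    setup_testing_defaults(environ)
    environ["PATH_INFO"] = path
    captured = {}

    def start_response(status, headers):
        captured["status"] = status

    body = b"".join(app(environ, start_response))
    return captured["status"], json.loads(body)


status, result = call("/daily/soil_moisture")
```

The same `app` object could be served for local testing with `wsgiref.simple_server.make_server`, and a charting library in the browser would then request the JSON and plot it.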

Middleware Package Descriptions

In this section, several middleware packages are described as a basic introduction to the packages. No specific endorsement or criticism is implied, and it is important to keep in mind that software packages are often revised.

- Aquatic Informatics Aquarius: AQUARIUS is a software package for water time series data management. It provides functionality to correct and quality-control time series data, build rating curves, transform and visualize hydrological data, and publish field data in real time. To accomplish these tasks, AQUARIUS uses three main components. The Data Acquisition System provides access to real-time data from field instrumentation, either as an extension to EnviroSCADA or through hot folders. AQUARIUS Server is a web-controlled data management platform that enables centralized access to data sets stored in the database. The server is also used to publish the data, either through a public web portal or as a Representational State Transfer (REST) web service that supports the WaterML representation of the data. Finally, AQUARIUS Workstation provides a set of data processing tools to import and process the data, create rating curves, and apply QA/QC procedures to the time series records.

- Campbell Scientific LoggerNet: LoggerNet is the main Campbell Scientific software application used to set up, operate, and manage a sensor network that uses Campbell Scientific equipment. LoggerNet uses serial ports, telephony drivers, and Ethernet hardware to communicate with data loggers via cellular and phone modems, RF devices, and other peripherals. LoggerNet also includes a suite of tools such as text editors for creating Campbell Scientific datalogger programs, and methods for real-time monitoring, automated data retrieval, data post-processing, data visualization and monitoring of retrieved information, and data publishing. More advanced features, such as export to MySQL or SQL Server databases, are offered through additional LoggerNet applications not included in the standard version (LoggerNet Database, LoggerNet Admin, LoggerNet Remote, etc.).

- CUAHSI HIS: The Consortium of Universities for the Advancement of Hydrologic Science, Inc.'s Hydrologic Information System (CUAHSI HIS) is an advanced web-service-based system created to share hydrologic data. The system is composed of hydrologic databases running the CUAHSI Observations Data Model (ODM) and servers hosted by different organizations that are connected through web services. Centralized HIS modules are used for data publication, access, and discovery, while local (and central) modules provide tools for data analysis and visualization. Overall, CUAHSI HIS is used to store observation data in a relational data model (ODM), access the data through internet-based Water Data Services that publish the observations and metadata using a consistent Water Markup Language (WaterML), index the data through a National Water Metadata Catalog, and support data discovery through a map and keyword search system.

- CUAHSI HIS components

- CUAHSI HIS list of publications

- Development of a Community Hydrologic Information System

- Data Turbine Initiative: DataTurbine is a real-time streaming data engine that acts as a black box to which data providers (sources) send data and from which consumers (sinks) receive data. DataTurbine is implemented as a multi-tier Java application, with servers accepting and serving up the data, sources loading the data onto the servers, and sinks pulling the data for visualization and analysis. These components can be located on the same machine or on different computers and can communicate with each other over the internet. The data can be heterogeneous, and sinks can access any type of data seamlessly. As new data are loaded onto the server(s), the oldest data are erased to free the receiving buffers.

- GCE Data Toolbox: The Georgia Coastal Ecosystems (GCE) Data Toolbox is a software library for metadata-based processing, quality control, and analysis of environmental data. It is designed and maintained by Wade M. Sheldon, Jr. of the Georgia Coastal Ecosystems LTER and is available free of charge, but does require a MATLAB license. The Toolbox can be used for a wide variety of environmental data management tasks such as: importing raw data from environmental sensors for post-processing and analysis; performing quality control analysis using rule-based and interactive flagging tools; gap-filling and correcting data using gated interpolation, drift correction, and custom algorithms/models; visualizing data using frequency histograms, line/scatter plots, and map plots; summarizing and re-sampling data sets using aggregation, binning, and date/time scaling tools; synthesizing data by combining multiple data sets using join and merge tools; mining near-real-time or historic data from the USGS NWIS, NOAA NCDC, NOAA HADS, or LTER ClimDB servers; and harvesting and integrating channel data from DataTurbine servers. This software is highly modular and can be used as a complete, lightweight solution for environmental data and metadata management, or in conjunction with other cyberinfrastructure. For example, newly acquired data can be retrieved from DataTurbine or Campbell LoggerNet Database servers for quality control and processing, then transformed to CUAHSI Observations Data Model format and uploaded to a HydroServer for distribution through the CUAHSI Hydrologic Information System.

- Kisters WISKI: The WISKI software package is a tool for hydrological data management. WISKI is a Windows-based client/server system that stores its data in MS SQL or Oracle databases. The software combines data management features with tools to collect, store, analyze, visualize, and publish observation data. Typical data input sources are remote data collected from field data loggers, data imported from third parties via input files in different formats, records obtained from digitization of graphical charts, and manual inputs. The main WISKI module incorporates the data management functionality as well as the discharge and rating curve tools, and it works closely with other Kisters software components including KiWQM (water quality), KiWIS (data publishing through web services), SODA (telemetry hardware module for remote data collection), KiDSM (task scheduler), modeling applications (Link-and-Node and statistical forecast), ArcGIS extensions, and Web Public and Web Pro (web server publishing applications).

- NexSens iChart: NexSens iChart is a Windows-based data acquisition package designed for environmental monitoring applications. iChart supports interfacing both locally (direct connect) and remotely (through telemetry) with many popular environmental products such as YSI, OTT, and ISCO sensors. Additionally, it can interface with NexSens iSIC and submersible data loggers. The software simplifies and automates many of the tasks associated with acquiring, processing, and publishing environmental data.

- NexSens WQData and iChart software overview (http://nexsens.com/pdf/nexsens_wqdata_spec.pdf)

- iChart software product spotlight (Lake Scientist)

- NexSens data website (Bucknell University)

- iChart quick start guide

- Onset Hobolink and Hoboware: Onset has two main software applications to support its Hobo data loggers and sensors. Hobolink is an online service that provides 5-minute data from its data loggers, multiple graphs of data streams, a customizable interface, settings for automated alerts for sensor malfunction, and customizable data reporting features. Hoboware is a downloadable package that provides more functionality, such as line charts for more than one data stream and chart types that are unavailable in Hobolink.

- HOBOware Pro vs. HOBOware Lite List of features

- HOBOware® User’s Guide (Data visualization and analysis)

- HOBOlink® User’s Guide (Data access and control of HOBO devices)

- Vista Data Vision: VDV is a data management system with tools to store and organize data collected from a variety of data logger “dat” files. The software offers different visualization, alarming, reporting, and web publishing features. Data logger files are parsed, imported, and stored in a MySQL relational database, from which the data can be custom queried and exported or published on a web server. Numerous access control options are available so that VDV users can have customized access to specific station or sensor data.

- Vista Data Vision brochures and manuals

- Vista Data Vision version comparison

- Vista Data Vision Review (LTER)

- YSI EcoNet: EcoNet software works with YSI monitoring instrumentation. The software offers delivery of data from the field directly to the YSI web server. No desktop applications are used and all data are stored on the remote YSI computer. System users can access visualization, reports, alarms, and email notification tools directly on the YSI server.
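
The ring-buffer behavior described in the DataTurbine entry above (sources write, sinks read, and the oldest data are discarded as new data arrive) can be illustrated with a short sketch. This is not DataTurbine's API. The class and method names are hypothetical, and the sketch only models a single in-memory channel.

```python
from collections import deque


class RingBufferChannel:
    """Fixed-capacity channel: sources append frames, sinks read what remains."""

    def __init__(self, capacity):
        self._frames = deque(maxlen=capacity)  # oldest frame is dropped when full
        self._next_index = 0

    def put(self, timestamp, payload):
        """Source side: append one frame and return its index."""
        index = self._next_index
        self._frames.append((index, timestamp, payload))
        self._next_index += 1
        return index

    def fetch_since(self, last_seen):
        """Sink side: return buffered frames with an index above last_seen."""
        return [frame for frame in self._frames if frame[0] > last_seen]


channel = RingBufferChannel(capacity=3)
for i in range(5):
    channel.put(timestamp=i * 60, payload={"temp_c": 20.0 + i})

# A sink that has seen nothing yet only gets the three newest frames.
frames = channel.fetch_since(-1)
```

Because old frames disappear once the buffer fills, a sink that needs a complete record must either poll often enough or write what it receives to a permanent archive.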

The following tables describe features of middleware packages known to the authors of the wiki. These tables do not imply endorsement or criticism of any given product, and may reflect older versions of products than currently exist. Feature levels in the tables are described with the following terms:

- Basic: The software has built-in but basic features compared to the overall market.

- Standard: The software has built-in features that are standard compared to the overall market.

- Advanced: The software has built-in advanced features compared to the overall market.

- Custom: The software doesn't have built-in features, but a programmer can develop them.

- None: The software doesn't have the feature, and it cannot be custom-developed.

- Has: The software has built-in features, but their level compared with the overall market is currently unknown.

- Unknown: The capacity of the software is unknown.

Table 1. Middleware basic features: licensing, cost, input and export data formats, and required level of programming expertise.

| Program | Licensing | Cost | Input data format | Export data format | Needed programming expertise |
|---|---|---|---|---|---|
| Antelope Orb | Proprietary | Pay | ASCII, Binary | ASCII, Binary | Advanced |
| Aquarius | Proprietary | Pay | | | Advanced |
| ArcGIS | Proprietary | Pay | ASCII, shapefiles | ASCII, shapefiles | Advanced |
| B3 | Open source | Free | ASCII | ASCII | None to Basic |
| BigSense and LtSense | Open source | Free | Binary | CSV, JSON, TXT, XML | Advanced |
| Cosm | | | | | |
| CUAHSI HIS | Open source | Free | ASCII | XML, WaterML | Standard |
| DataTurbine | Open source | Free | ASCII, Binary | ASCII, Binary | Advanced |
| EddyPro | Proprietary | Pay | Binary | ASCII, Binary | Standard |
| GCE Toolbox | MATLAB is proprietary, Toolbox is open source | MATLAB is pay, Toolbox is free | ASCII, Binary, database | ASCII, Binary, .mat, database | Toolbox is Standard, MATLAB is Advanced |
| Hobolink (Onset) | Proprietary | Free | Proprietary | ASCII, Proprietary | None |
| Hoboware (Onset) | Proprietary | Pay | Proprietary | ASCII, Proprietary | None |
| Kepler | Open source | Free | ASCII, Binary | ASCII, Binary | Basic to Advanced |
| Lake Analyzer | Proprietary/Open source | Free | ASCII | ASCII | Basic |
| LoggerNet (Campbell) | Proprietary | Pay | Proprietary | ASCII, database | Standard |
| NexSens Technology | Proprietary | Pay | Unknown | Unknown | Unknown |
| Pandas | Open source | Free (Python and Pandas) | Binary, encoded, np.array, database, markup | Binary, encoded, np.array, database, markup | Advanced and Custom |
| Pegasus | Unknown | Unknown | Unknown | Unknown | Unknown |
| R | Open source | Free | ASCII, Binary, database | ASCII, Binary, database | Standard to Advanced |
| SAS | Proprietary | Pay | ASCII, Binary, database | ASCII, Binary, database | Standard to Advanced |
| Taverna | Open source | Free | Unknown | Unknown | Standard to Advanced |
| Vista Data Vision | Proprietary | Pay | ASCII | ASCII | Unknown |
| VisTrails | Open source | Free | ASCII | ASCII | Basic to Advanced |
| WaterML support | Unknown | Unknown | Unknown | Unknown | Unknown |
| WISKI | Proprietary | Pay | ASCII | ASCII | Advanced |
| YSI EcoNet | Proprietary | Pay | Unknown | Unknown | Unknown |

Table 2. Middleware data handling features: hardware communication, ability to do quality assurance and control (QA/QC), ability to stream data to archives, data visualization, data transformation and analysis, and ability to generate custom SQL queries or other scripting.

| Program | Hardware communication | QA/QC capacity | Capacity to stream to archive | Data transformation and analysis | Data visualization | Custom SQL queries/Scripting |
|---|---|---|---|---|---|---|
| Antelope Orb | Custom | Custom | Custom | Custom | Custom | Custom |
| Aquarius | Has | Advanced | Advanced | Advanced | Advanced | Advanced |
| ArcGIS | Unknown | Advanced | Unknown | Advanced | Advanced | Standard to Advanced |
| B3 | None | Advanced | None | Has | Has | None |
| BigSense and LtSense | Custom | Custom | Has | Has | Unknown | Unknown |
| Cosm | Unknown | Unknown | Unknown | Unknown | Unknown | Unknown |
| CUAHSI HIS | Custom | Advanced | Advanced (ODM) | Advanced (HydroDesktop, ODM Tools, TSA) | Advanced (HydroServer TSA, HydroDesktop, external programs) | Advanced (HydroDesktop, ODM Tools) |
| DataTurbine | Custom | Custom | Custom | Basic (NEES RDV) | Basic (NEES RDV) | Has |
| DataFrames.jl | Through C and Python libs | Has, stats.jl, numpy | Has through code.native | Has through Gadfly, Matplotlib, D3, or Winston | Has | Has |
| EddyPro | Unknown | Has | Unknown | Has | Has | Unknown |
| GCE Matlab | Custom | Advanced | Standard to Advanced | Advanced (with Matlab) | Advanced | Advanced |
| Hobolink (Onset) | Basic | None | Basic | Standard | None | None |
| Hoboware (Onset) | Advanced | Has | Unknown | Advanced | Standard | None |
| Kepler | Custom | Custom | Custom | Custom | Custom | Custom |
| Lake Analyzer | None | Basic | None | Has | Has | None |
| LoggerNet (Campbell) | Advanced | Basic | Basic | Basic | None | None |
| NexSens Technology | Has | None | Basic | Basic | None | None |
| Pegasus | Unknown | Unknown | Unknown | Unknown | Unknown | Unknown |
| R | Custom with open-source tech | Custom | Custom | Advanced/Custom | Advanced/Custom | Custom |
| SAS | None | Custom | Custom | Advanced | Advanced | Custom |
| Taverna | Unknown | Custom | Custom | Custom | Custom | Custom |
| Vista Data Vision | None | Basic | Standard | Standard | Standard | Basic |
| VisTrails | Unknown | Custom | Unknown | Custom | Custom | Custom |
| WaterML support | Unknown | Unknown | Unknown | Unknown | Unknown | Unknown |
| WISKI | Has | Advanced | Advanced | Advanced | Advanced | Advanced |
| YSI EcoNet | Has | None | Basic | Basic | None | None |

Table 3. Middleware other features: Task automation, capacity for multi-tier architecture, website publishing, streaming through web services, support for modeling.

| Program | Task automation | Multi-tier architecture | Website publishing | Streaming through web service | Support for modeling |
|---|---|---|---|---|---|
| Antelope Orb | Has | Standard | Custom | Custom | Unknown |
| Aquarius | None | Advanced | Advanced | Unknown | Unknown |
| ArcGIS | Advanced | Unknown | Advanced | Advanced | Advanced |
| B3 | Unknown | None | None | None | Has |
| BigSense and LtSense | Has | Has | Has (via RESTful services) | Has | Unknown |
| Cosm | Unknown | Unknown | Unknown | Unknown | Unknown |
| CUAHSI HIS | Advanced (ODM SDL) | Advanced | Advanced (HydroServer, Website, HydroSeek) | Advanced | Has (through external programs) |
| DataTurbine | Has | Standard | Basic | Standard | None |
| EddyPro | Has | Unknown | Unknown | Unknown | Unknown |
| GCE Matlab | Advanced | None | Advanced | None | Advanced (with Matlab) |
| Hobolink (Onset) | Basic | None | Advanced | Has | None |
| Hoboware (Onset) | Basic | None | None | None | None |
| Kepler | Custom | Unknown | Custom | Custom | Advanced/Custom |
| Lake Analyzer | Custom (Matlab) | None | None | None | Basic (link to GLM model) |
| LoggerNet (Campbell) | Standard | Basic | Basic | None | None |
| NexSens Technology | Basic | Basic | Basic | None | None |
| Pegasus | Unknown | Unknown | Unknown | Unknown | Unknown |
| R | Custom | None | Custom (shiny package) | Custom | Advanced/Custom |
| SAS | Custom | None | Custom | Custom | Advanced/Custom |
| Taverna | Custom | None | Unknown | Custom | Advanced/Custom |
| Vista Data Vision | None | Standard | Advanced | None | None |
| VisTrails | Custom | None | Custom | Custom | Advanced/Custom |
| WaterML support | Unknown | Unknown | Unknown | Unknown | Unknown |
| WISKI | Advanced | Advanced | Advanced | Advanced | Has |
| YSI EcoNet | Basic | None | Basic | None | None |
