Interoperability and Technology/Tech Dive Webinar Series

Past Tech Dive Webinars (2015-2022)

11 May 2023: "Software Procurement Has Failed Us Completely, But No More!"

The way we buy custom software is terrible for everybody involved, and has become a major obstacle to agencies achieving their missions. There are solutions, if we would just use them! By combining the standard practices of user research, Agile software development, open source, modular procurement, and time & materials contracts, we can make procurement once again serve the needs of government.

Slides available here: File:2023-05-Jaquith.pdf

Recording:

Minutes:

Recognizing that procurement is one of the primary ways that software / IT systems advance, Waldo set out to understand that space from the perspective of a software developer.

Healthcare.gov

Contract given to CGI Federal for $93M – cost ~$1.7B by launch.

Only a low single-digit number of people actually made it through the system.

Senior leaders were given the impression that things were in good shape.

The developers working on the site knew it wasn't going to work out (per IG report).

- strategic misrepresentation – things are represented as more rosy as you go up the chain of command

On launch, things went very badly, but the recovery was actually quite quick and positive.

Waldo recommends reading the IG report on healthcare.gov.

This article:

("The OIG Report Analyzing Healthcare.gov's Launch: What's There And What's Not", Health Affairs Blog, February 24, 2016. https://dx.doi.org/10.1377/hblog20160224.053370) provides a path to the IG report:

(HealthCare.gov - CMS Management of the Federal Marketplace: An OIG Case Study (OEI-06-14-00350), https://oig.hhs.gov/oei/reports/oei-06-14-00350.pdf)

and additional perspective.

Rhode Island Unified Health Infrastructure

($364M to Deloitte) "Big Bang" deployment – they let the people running the old systems go on the day of the new system launch. They "outsourced" a mission-critical function to a contractor.

We don't tend to hear about relatively smaller projects because they are less likely to fail and garner less attention.

Outsourcing, as it started around the 1990s, was one thing when it covered internal agency software. It's different when the systems are actually public interfaces to stakeholders or are otherwise mission critical.

It's common for software to meet contract requirements but NOT meet end user needs.

Requirements complexity is fractal. There is no complete / comprehensive set of requirements.

… federal contractors interpreting requirements as children trying to resist getting out the door ...

There is little to no potential to update or improve requirements due to contract structure.

Demos not memos!

Memorable statements:

- Outsourced ability to accomplish agency’s mission

- Load bearing software systems on which the agency depends to complete their mission.

- Mission of many agencies is mediated by technology.

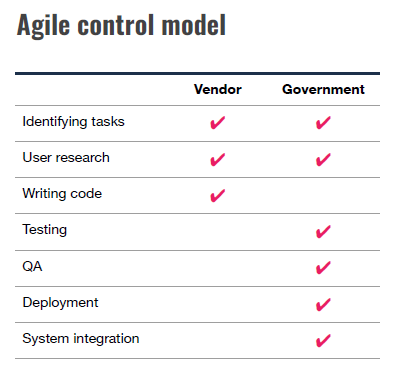

But no more! – approach developed by 18F

System of six parts –

1. User-centered design

2. Agile software development

3. Product ownership

4. DevOps

5. Building out of loosely coupled parts

6. Modular contracting

"You don't know what people need till you talk to them."

Basic premise of agile is good. Focus is on finished software being developed every two weeks.

Constantly delivering a usable product... e.g. A skateboard is more usable than a car part.

Key roles for government staff around operations are too often overlooked.

Product team needs to include an Agency Product Owner. Allows government representation in software development iteration.

Build out of loosely coupled / interchangeable components. Allows you to do smaller things and form big coherent systems that can evolve.

Modular contracts allow big projects that are delivered through many small task orders or contracts. The contract document is kind of a fill in the blank template and doesn't have to be hard.

13 April 2023: "Evolution of open source geospatial python."

Free and Open Source Software in the geospatial ecosystem (i.e. FOSS4G) plays a key role in geospatial systems and services. Python has become the lingua franca for scientific and geospatial software and tooling. This rant and rave will provide an overview of the evolution of FOSS4G and Python, focusing on popular projects in support of Open Standards.

Slides: https://geopython.github.io/presentation

Recording:

Minutes:

MapServer has been around for 23 years!

Why Python for Geospatial?

- Ubiquity

- Cross OS compatible

- Legible and easy to understand what it's doing

- Support ecosystem is strong (PyPI, etc.)

- Balance of performance and ease of implementation

- Python: fast enough, and fast in human time -- more intensive workloads can glue to C/C++

The new generation of OGC services – based on JSON, so the API interoperates with client environments / objects at a much more direct level.
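As a rough illustration of what JSON-based OGC services mean for a client, the sketch below builds an OGC API - Features items request and reads a GeoJSON response using only the Python standard library. The base URL, collection name, and sample response are hypothetical and were not part of the talk; the `/collections/{collectionId}/items` path and the `bbox` and `limit` parameters follow the OGC API - Features standard.

```python
import json
from urllib.parse import urlencode


def items_url(base_url, collection_id, bbox=None, limit=10):
    """Build an OGC API - Features 'items' request URL."""
    params = {"limit": limit}
    if bbox is not None:
        # bbox is minx, miny, maxx, maxy as one comma-separated value
        params["bbox"] = ",".join(str(v) for v in bbox)
    query = urlencode(params, safe=",")
    return f"{base_url.rstrip('/')}/collections/{collection_id}/items?{query}"


# Hypothetical GeoJSON response, standing in for what a server would return.
SAMPLE_RESPONSE = """
{"type": "FeatureCollection",
 "features": [
   {"type": "Feature", "id": "gage-1",
    "geometry": {"type": "Point", "coordinates": [-104.5, 39.5]},
    "properties": {"name": "Example gage"}}
 ]}
"""


def feature_names(geojson_text):
    """Parse a GeoJSON FeatureCollection straight into Python objects."""
    collection = json.loads(geojson_text)
    return [feature["properties"]["name"] for feature in collection["features"]]


print(items_url("https://example.org/api", "gages", bbox=(-105.0, 39.0, -104.0, 40.0), limit=5))
print(feature_names(SAMPLE_RESPONSE))
```

The response parses directly into dictionaries and lists, which is the "more direct level" of interoperation with client objects noted above.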

The geopython ecosystem has a number of low level components that are used across multiple projects.

pygeoapi is an OGC API reference implementation and an OSGeo project. E.g. https://github.com/developmentseed/geojson-pydantic

pygeoapi implements OGC API - Environmental Data Retrieval (EDR) https://ogcapi.ogc.org/edr/overview.html

pygeoapi has a plugin architecture. https://pygeoapi.io/ https://code.usgs.gov/wma/nhgf/pygeoapi-plugin-cookiecutter

pycsw is an OGC CSW and OGC API - Records implementation. Works with pygeometa for metadata creation and maintenance. https://geopython.github.io/pygeometa/

There's a real trade off to "the shiny object" vs the long term sustainability of an approach. Geopython has generally erred on the side of "does it work in a virtualenv out of the box".

How does pycsw work with STAC and other catalog APIs? pycsw can convert between various representations of the same basic metadata resource.

"That's a pattern… People can implement things the way they want."

Chat Highlights:

- You can also write a C program that is slower than Python if you aren't careful =).

- https://www.ogc.org/standards/ has lots of useful details

- For anyone interested in geojson API development in Python, I just recently came across this https://github.com/developmentseed/geojson-pydantic

- OGC API - Environmental Data Retrieval (EDR) https://ogcapi.ogc.org/edr/overview.html

- Our team has a pygeoapi plugin cookiecutter that we are hopeful others can get some mileage out of. https://code.usgs.gov/wma/nhgf/pygeoapi-plugin-cookiecutter

- I'm going to post this here and run: https://twitter.com/GdalOrg/status/1613589544737148944

- 100% agreed. That's unfortunate, but PyPI is not designed to deal with binary wheels of beasts like me which depend of ~ 80 direct or indirect other native libraries. Best solution or least worst solution depending on each one's view is "conda install -c conda-forge gdal"

- General question here - you mentioned getting away from GDAL in a previous project. What are your thoughts on GDAL's role in geospatial python moving forward, and how will pygeoapi accommodate that?

- Never, ever works with the wheels!

- Kitware has some pre-compiled wheels as well: https://github.com/girder/large_image

- In the pangeo.io project, our go to tools are geopandas for tabular geospatial data, xarray/rioxarray for n-dimensional array data, dask for parallelization, and holoviz for interactive visualization. We use the conda-forge channel pretty much exclusively to build out environments

- If you work on Windows, good luck getting the Python gdal/geos-based tools installed without Conda

- data formats and standards are what make it difficult to get away from GDAL -- it just supports so many different backends! Picking those apart and cutting legacy formats or developing more modular tools to deal with each of those things "natively" in python would be required to get away from the large dependency on something like GDAL.

- Sustainability and maintainability is always good to ask yourself "how easy will it be to replace this dependency when it no longer works?"

- No one should build gdal alone (unless it is winter and you need a source of heat). Join us at https://github.com/conda-forge/gdal-feedstock

9 Mar 2023: "Meeting Data Where it Lives: the power of virtual access patterns"

Mike Johnson (Lynker, NOAA-affiliate) will rant and rave about the VRT and VSI (curl and S3) virtual data access patterns and how he's used them to work with LCMAP and 3DEP data in integrated climate and data analysis workflows.

Recording:

Minutes:

- VRT stands for "ViRTual"

- VSI stands for "Virtual System Interface"

- Framed by FAIR

LCMAP – requires fairly complex URLs to access specific data elements.

3DEP - need to understand tiling scheme to access data across domains.

Note some large packages (zip files) where only one small file is actually desired.

NWM datasets in NetCDF files that change name (with time step) daily as they are archived.

Implications for Findability, Accessibility, and Reuse – note that interoperability is actually pretty good once you have the data.

VRT: – an XML "metadata" wrapper around one or more tif files.

Use case 1: download all of 3DEP tiles and wrap in a VRT xml file.

- VRT has an overall aggregated grid "shape"

- Includes references to all the individual files.

- Can access the dataset through the vrt wrapper to work across all the tiles.

- Creates a seamless collection of subdatasets

- Major improvement to accessibility.
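To make the "XML wrapper" idea concrete, here is a minimal sketch that writes a bare-bones VRT for a set of same-sized tiles laid out on a grid. It is not the presenter's workflow: the tile names and sizes are hypothetical, and georeferencing (the `SRS` and `GeoTransform` elements) is omitted for brevity. In practice GDAL's `gdalbuildvrt` utility generates this file for you.

```python
import xml.etree.ElementTree as ET


def build_vrt(tiles, tile_width, tile_height, data_type="Float32"):
    """Return VRT XML that mosaics same-sized tiles laid out on a grid.

    tiles is a list of (filename, column, row) tuples (hypothetical layout).
    """
    n_cols = max(col for _, col, _ in tiles) + 1
    n_rows = max(row for _, _, row in tiles) + 1
    # The root element carries the overall aggregated grid shape.
    root = ET.Element(
        "VRTDataset",
        rasterXSize=str(n_cols * tile_width),
        rasterYSize=str(n_rows * tile_height),
    )
    band = ET.SubElement(root, "VRTRasterBand", dataType=data_type, band="1")
    for filename, col, row in tiles:
        # Each source is only a reference to a tile plus where it lands.
        source = ET.SubElement(band, "SimpleSource")
        ET.SubElement(source, "SourceFilename", relativeToVRT="0").text = filename
        ET.SubElement(source, "SourceBand").text = "1"
        ET.SubElement(source, "SrcRect", xOff="0", yOff="0",
                      xSize=str(tile_width), ySize=str(tile_height))
        ET.SubElement(source, "DstRect",
                      xOff=str(col * tile_width), yOff=str(row * tile_height),
                      xSize=str(tile_width), ySize=str(tile_height))
    return ET.tostring(root, encoding="unicode")


print(build_vrt([("tile_a.tif", 0, 0), ("tile_b.tif", 1, 0)], 100, 50))
```

The wrapper holds no pixel data, only the combined grid shape and pointers to the individual files.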

If you have to download the data is that "reuse" of the data??

VSI: – allows virtualization of data from remote resources available as a few protocols (S3/http/compressed)

Wide variety of GDAL utilities to access VSI files – zip, tar, 7zip

Use case 2: Access a tif file remotely without downloading all the data in the file.

- Uses vsi to access a single tif file

Use case 3: Use vsi within a vrt to remotely access contents of remote tif files.

- Note that the vrt file doesn't actually have to be local itself.

- If the tiles that the vrt points to update, the vrt will update by default.

- Can easily access and reuse data without actually copying it around.

Use case 4: OGR using vsi to access a shapefile in a tar.gz file remotely.

- Can create a nested url pattern to access contents of the tar.gz remotely.
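The VSI use cases above mostly come down to building the right path string. The sketch below assembles such paths with plain string handling; the example URLs are hypothetical, while the `/vsicurl/`, `/vsis3/`, `/vsizip/`, and `/vsitar/` prefixes are GDAL's documented virtual file system handlers.

```python
# Archive extensions mapped to the GDAL handler that reads inside them.
ARCHIVE_PREFIXES = {
    ".zip": "/vsizip/",
    ".tar": "/vsitar/",
    ".tgz": "/vsitar/",
    ".tar.gz": "/vsitar/",
}


def vsi_path(url, inner_path=None):
    """Build a GDAL virtual file system path for a remote file.

    If inner_path is given, url must point at an archive and the result
    is a nested (chained) path to the file inside it.
    """
    if url.startswith("s3://"):
        remote = "/vsis3/" + url[len("s3://"):]
    elif url.startswith(("http://", "https://")):
        remote = "/vsicurl/" + url
    else:
        raise ValueError(f"unsupported URL scheme: {url}")
    if inner_path is None:
        return remote
    for extension, prefix in ARCHIVE_PREFIXES.items():
        if url.lower().endswith(extension):
            # Chaining: archive handler + remote handler + path in archive
            return f"{prefix}{remote}/{inner_path.lstrip('/')}"
    raise ValueError(f"not a recognized archive: {url}")


print(vsi_path("https://example.org/dem/tile_01.tif"))
print(vsi_path("https://example.org/data/roads.tar.gz", "roads/roads.shp"))
```

The resulting strings can be used wherever a GDAL or OGR based tool expects a file name, including as the source file names inside a VRT.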

Use case 5: NWM shortrange forecast of streamflow in a netcdf file.

- Prepending "HDF5:" to the front of a vsicurl url allows access to a netcdf file directly.

- The access url pattern is SUPER tricky to get right.
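A small helper can keep the quoting in this pattern straight. This is a sketch under assumptions: the URL and the `streamflow` variable name are illustrative only, and the two formats shown follow GDAL's subdataset naming for its HDF5 and netCDF drivers.

```python
def netcdf_variable_path(url, variable, driver="HDF5"):
    """Build a GDAL subdataset name for one variable in a remote NetCDF file."""
    remote = "/vsicurl/" + url
    if driver == "HDF5":
        # HDF5 driver form: HDF5:"<file>"://<path to dataset>
        return f'HDF5:"{remote}"://{variable}'
    if driver == "NETCDF":
        # netCDF driver form: NETCDF:"<file>":<variable>
        return f'NETCDF:"{remote}":{variable}'
    raise ValueError(f"unsupported driver: {driver}")


print(netcdf_variable_path("https://example.org/nwm/forecast.nc", "streamflow"))
```

Note the double quotes around the file part and the different separators between file and variable; these are the details that are easy to get wrong by hand.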

Use case 6: "flat catalogs"

- Stores a flat (denormalized) table of data variables with the information required to construct URLs.

- Can search based on rudimentary metadata within the catalog.

- Can access and reuse data from any host in the same workflow.

Use case 7: access NWM current and archived data from a variety of cloud data stores.

- Leveraging the flat catalog content to fix up urls and data access nuances.

Flat catalog improves findability down at the level of individual data variables.
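The flat catalog idea can be sketched in a few lines: one row per data variable, each carrying what is needed to construct an access URL. The entries, field names, and URL templates below are hypothetical and are not taken from the actual catalog discussed in the talk.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CatalogEntry:
    """One row of a flat (denormalized) catalog: one data variable."""
    source: str
    variable: str
    url_template: str
    description: str


# Hypothetical rows; a real catalog would have many more columns and rows.
CATALOG = [
    CatalogEntry(
        "example-elevation", "elevation",
        "/vsicurl/https://example.org/dem/{tile}.tif",
        "Hypothetical tiled elevation",
    ),
    CatalogEntry(
        "example-forecast", "streamflow",
        'HDF5:"/vsicurl/https://example.org/nwm/{date}/t{hour:02d}z.nc"://streamflow',
        "Hypothetical short-range streamflow forecast",
    ),
]


def search(catalog, text):
    """Rudimentary metadata search over variable names and descriptions."""
    text = text.lower()
    return [entry for entry in catalog
            if text in entry.variable.lower() or text in entry.description.lower()]


def access_url(entry, **parts):
    """Fill in the entry's URL template (e.g. date, hour, or tile)."""
    return entry.url_template.format(**parts)


hit = search(CATALOG, "streamflow")[0]
print(access_url(hit, date="20230309", hour=6))
```

Because each row carries its own template, data hosted on different services can be found and accessed through the same two functions, and file names that change with the time step are handled by the template parameters.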

Take Aways / discussion:

Question about the flat catalog:

"Minimal set of shortcuts" to get at this fast access mechanism.

Is the flat catalog manually curated?

More or less – all are automated but some custom logic is required to add additional content.

Would be great to systematize creation of this flat catalog more broadly.

Question: Could some “examples” be posted either in this doc or elsewhere (or links to examples), for a beginner to copy/paste some code and see for themselves, begin to think about how we’d use this? Something super basic please.

GDAL documentation is good but doesn't have many examples.

climateR has a workflow that shows how the catalog was built.

What about authentication issues?

- S3 is handled at a session level.

- Earthengine can be handled similarly.
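One way session-level S3 handling might look with GDAL-based tools is to set GDAL's documented AWS configuration options as environment variables once, before any `/vsis3/` path is opened. This is an assumption about the setup, not the presenter's code, and the credential values shown are placeholders.

```python
import os


def s3_session_options(anonymous=True, access_key=None, secret_key=None, region=None):
    """Collect GDAL configuration options for /vsis3/ access."""
    options = {}
    if anonymous:
        # Public buckets: tell GDAL not to sign requests.
        options["AWS_NO_SIGN_REQUEST"] = "YES"
    else:
        if not (access_key and secret_key):
            raise ValueError("access_key and secret_key are required")
        options["AWS_ACCESS_KEY_ID"] = access_key
        options["AWS_SECRET_ACCESS_KEY"] = secret_key
    if region:
        options["AWS_REGION"] = region
    return options


def apply_to_session(options, environ=os.environ):
    """Set the options once so later reads in this session pick them up."""
    environ.update(options)


print(s3_session_options())
```

Once applied, individual data requests do not need to carry credentials themselves.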

How much word of mouth or human-to-human interaction is required for the catalog?

- If there is a stable entrypoint (S3 bucket for example) some automation is possible.

- If entrypoints change, configuration needs to be changed based on human intervention.

9 Feb 2023: "February 2023 - Rants & Raves"

The conversation built on the "rants and raves" session from the 2023 January ESIP Meeting, starting with very short presentations and an in-depth discussion on interoperability and the Committee's next steps.

Recording:

Minutes:

- Mike Mahoney: Make Reproducibility Easy

- Dave Blodgett: FAIR data and Science Data Gateways

- Doug Fils: Web architecture and Semantic Web

- Megan Carter: Opening Doors for Collaboration

- Yuhan (Douglas) Rao: Where are we for AI-ready data?

I had a few major takeaways from the Winter Meeting:

- We have come a long way in IT interoperability but most of our tools are based on tried and true fundamentals. We should all know more about those fundamentals.

- There are a TON of unique entry points to things that, at the end of the day, do more or less the same thing. These are opportunities to work together and share tools.

- The “shiny object” is a great way to build enthusiasm and trigger ideas and we need to better capture that enthusiasm and grow some shared knowledge base.

So with that, I want to suggest three core activities:

- We seek out presentations that explore foundational aspects of interoperability. I want to help build an awareness of the basics that we all kind of know but either take for granted, haven’t learned yet, or straight up forgot.

- We ask speakers to explore how a given solution fits into multiple domains' information systems and to discuss the tensions that arise from the diversity of use cases accommodated by an IT solution targeted at interoperability. We are especially interested to learn about the expense / risk of adopting dependencies vs the efficiency that can be gained from adopting pre-built dependencies.

- We look for opportunities to take small but meaningful steps to record the core aspects of these sessions in the form of web resources like the ESIP wiki or even Wikipedia. On this front, we will aim to construct a summary wiki page from each meeting, assembled from a working notes document and the presenting authors' contributions.